Introduction

When a CNC machine drifts by even a fraction of a millimeter, every part it produces inherits that error—compounding across hundreds or thousands of production runs. Measurement accuracy is the backbone of manufacturing quality, yet it rarely gets attention until a batch failure, customer complaint, or costly recall exposes how far things have drifted.

Calibration techniques go beyond routine maintenance. They represent a systematic process of comparing, verifying, and adjusting measurement equipment against trusted standards — so quality problems get caught before they become production problems.

For manufacturing facilities in Portland, OR and SW Washington, that distinction matters. Unchecked equipment drift translates directly into scrapped parts, rework labor, and schedule delays. This article covers the core calibration methods, what each one measures, and how to apply them effectively across CNC and precision manufacturing environments.

TL;DR

- Calibration compares instruments to known standards and corrects deviations; it is not the same as inspection or testing

- Accurate calibration prevents defective output, unplanned downtime, and costly rework in CNC and precision manufacturing

- The process follows defined stages: scope definition, standard selection, measurement, evaluation, and documentation

- Industry standards like NIST traceability and the 4:1 (or 10:1) accuracy ratio govern valid calibration

- Documented, recurring calibration protects production margins and catches errors before they become failures

What Is Calibration — And How Does It Differ from Inspection and Testing?

Calibration compares a measuring instrument's output against a traceable reference standard, then documents, and where necessary corrects, any deviation found. Put simply, it answers the question: is this instrument off, and if so, by exactly how much? (VIM 2.39 defines this as establishing the relation between instrument indications and known quantity values, each with stated measurement uncertainties.)

The Critical Distinctions

Calibration, inspection, and testing serve different roles in quality assurance:

- Calibration verifies and corrects instrument accuracy — every inspection and test result is only as reliable as the tools behind it

- Inspection evaluates whether a product or process conforms to specified requirements

- Testing confirms functional performance through pass/fail criteria

A fourth activity — verification — is often confused with calibration. Checking a caliper against a gage block confirms it reads within tolerance, but it doesn't establish traceability or document expanded uncertainty. That distinction matters when a customer or auditor asks you to prove your measurements are defensible.

Types of Manufacturing Calibration

Once you understand what calibration covers versus inspection and testing, the next step is knowing which type applies to your equipment. Manufacturing environments typically involve:

- Mechanical calibration — dimensional measurements, torque verification

- Electrical calibration — voltage, current, resistance verification

- Temperature calibration — sensors, thermocouples, environmental chambers

- Pressure calibration — gauges, transducers, regulators

- Flow calibration — meters, controllers, dispensing systems

Why Calibration Is Critical in Manufacturing Operations

The Compounding Error Problem

A measurement instrument even slightly out of tolerance produces flawed data on every part it measures. This error goes undetected until a batch failure, customer complaint, or recall reveals the scope of the problem. Unplanned downtime costs industrial manufacturers up to $852 million every week, with manufacturing operations reporting losses between $200,000 and $260,000 for each hour production lines remain idle.

Calibration drift transforms reliable CNC machines into sources of scrap parts. As drift continues, scrap rates climb while first-pass yield percentages decline, triggering emergency line stops and missed delivery commitments.

Direct Support for Production Efficiency

When instruments are verified accurate, operators can trust readings, reduce adjustment cycles, and minimize rework, which ties calibration directly to throughput and labor cost savings. Best-in-Class manufacturers spend 38% less of their annual revenue on total cost of quality by using effective quality management programs that include proactive calibration.

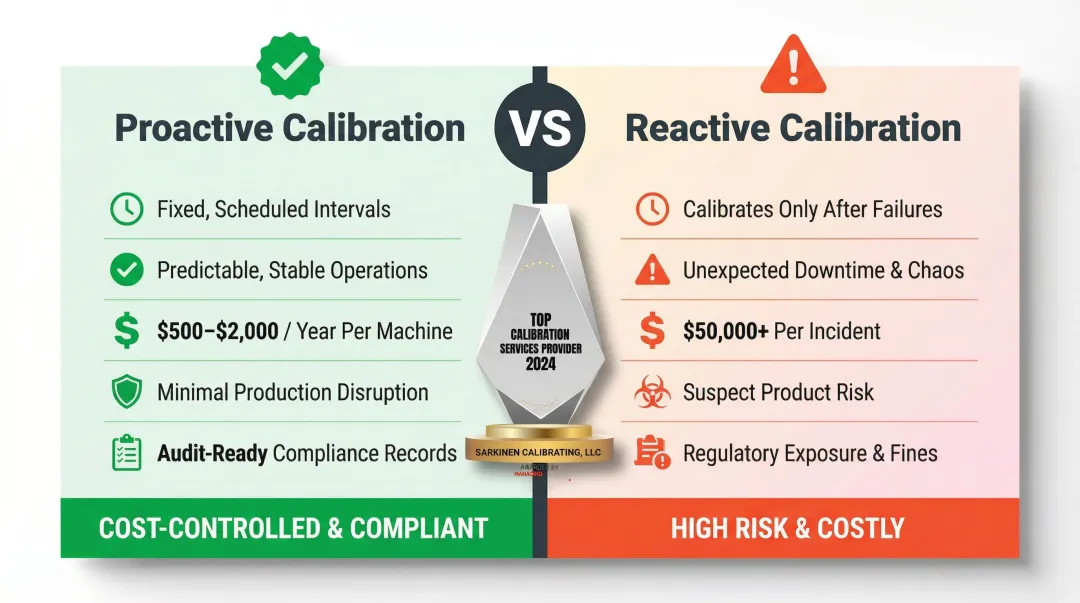

The cost difference is stark: preventive calibration runs $500 to $2,000 per machine annually. An emergency response—combined with scrap losses and customer penalties—routinely exceeds $50,000 per incident.

New Machine Verification Window

Calibrating equipment while still under warranty allows facilities to confirm the machine meets promised specifications before warranty coverage expires. Shipping and installation often introduce alignment errors or hidden damage. This verification window cannot be recovered once passed, making early calibration a critical risk management step.

That same discipline—catching issues before they compound—is what separates proactive calibration programs from reactive ones.

Proactive vs. Reactive Calibration

Facilities that calibrate on a fixed, documented schedule experience predictable operations and minimal disruption. Those that only calibrate after problems surface face unexpected downtime, suspect product, and regulatory exposure.

Optimizing calibration intervals using data analytics delivers an ROI of 126% over five years by reducing direct calibration costs, device de-installation efforts, and plant downtime.

Compliance and Customer Confidence

Many manufacturing customers and quality certifications require documented, traceable calibration records as proof that equipment can deliver consistent output. Documented calibration records support audit readiness, satisfy customer requirements, and demonstrate that your machines produce to spec—consistently, not just on a good day.

How the Calibration Process Works — Step by Step

Step 1 – Define Scope and Equipment Selection

Identify which instruments require calibration—all devices used to make accept/reject decisions on product. Determine the parameters to be measured and the required accuracy limits for each. Clarity here determines what standards and methods will be needed downstream.

Focus on instruments that directly impact product conformity:

- CNC machine axes (linear and rotary positioning)

- Measurement gages (calipers, micrometers, height gages)

- Pressure and temperature sensors

- Torque tools and force measurement devices

Step 2 – Select the Reference Standard

The reference standard must be significantly more accurate than the instrument being calibrated. The 4:1 to 10:1 accuracy ratio rule requires the standard to be at least four times (preferably ten times) more accurate than the specification being verified.
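As a rough illustration of the ratio check, the sketch below divides the instrument's tolerance by the reference standard's tolerance and compares the result to the required ratio. The function names and the example tolerances (expressed in microinches) are hypothetical and chosen only for illustration.

```python
def accuracy_ratio(instrument_tolerance: float, standard_tolerance: float) -> float:
    """Return how many times tighter the standard is than the instrument.

    Both tolerances must be in the same unit (for example, microinches).
    """
    if standard_tolerance <= 0:
        raise ValueError("standard_tolerance must be positive")
    return instrument_tolerance / standard_tolerance


def meets_ratio(instrument_tolerance: float, standard_tolerance: float,
                required: float = 4.0) -> bool:
    """True when the standard satisfies the required ratio (4:1 by default)."""
    return accuracy_ratio(instrument_tolerance, standard_tolerance) >= required


# Hypothetical example: an instrument specified to +/-100 microinches,
# checked against a standard good to +/-20 microinches.
print(accuracy_ratio(100, 20))             # 5.0
print(meets_ratio(100, 20))                # True: satisfies 4:1
print(meets_ratio(100, 20, required=10))   # False: falls short of 10:1
```

In this example the standard clears the 4:1 minimum but not the preferred 10:1, so a tighter standard would be needed to meet the stricter target.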

Standards must be traceable to NIST or recognized international standards to produce valid, auditable results. VIM 2.41 defines metrological traceability as "the property of a measurement result whereby the result can be related to a reference through a documented unbroken chain of calibrations, each contributing to the measurement uncertainty."

Step 3 – Prepare the Environment and Equipment

Pre-calibration requirements include:

- Environmental controls — ISO 1:2016 defines the standard reference temperature as exactly 20°C (68°F) for dimensional measurements

- Adequate warm-up time — instruments require stabilization before measurement

- Preliminary examination — inspect for visible damage or signs of wear

Skipping this stage is a common cause of invalid results discovered only later. Failing to control temperature causes thermal expansion errors; typical steel measuring equipment expands approximately 11.6 µm/m/°C, creating significant dimensional errors in tight-tolerance work.
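To put the expansion figure in perspective, here is a minimal sketch of the linear expansion arithmetic (growth = coefficient x length x temperature difference from 20°C). The 0.5 m part length and 23°C shop temperature are assumed values for illustration, and the function name is hypothetical.

```python
STEEL_CTE_UM_PER_M_PER_C = 11.6   # expansion coefficient quoted in the text
REFERENCE_TEMP_C = 20.0           # standard reference temperature


def thermal_growth_um(length_m: float, temp_c: float,
                      cte_um_per_m_per_c: float = STEEL_CTE_UM_PER_M_PER_C) -> float:
    """Length change in micrometers relative to the 20 C reference."""
    return cte_um_per_m_per_c * length_m * (temp_c - REFERENCE_TEMP_C)


# Assumed example: a 0.5 m steel feature measured in a 23 C shop.
growth = thermal_growth_um(length_m=0.5, temp_c=23.0)
print(f"{growth:.1f} um")  # prints 17.4 um
```

A shift of roughly 17 µm from a 3°C difference is already larger than many tight-tolerance bands, which is why temperature control comes before measurement.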

Step 4 – Perform the Calibration Measurement

Compare the instrument's readings against the reference standard across the relevant range of values. Record "as-found" readings before any adjustment is made. This before-and-after documentation is essential for traceability and detecting drift patterns over time.

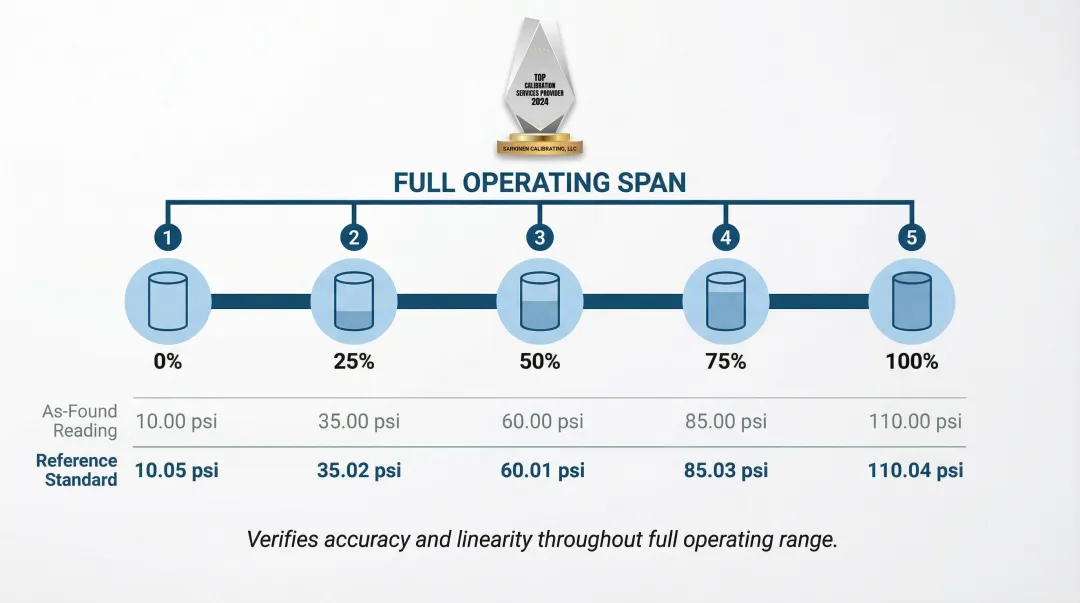

For example, a 5-point calibration involves measuring an instrument's output at five evenly spaced points across its full operating range (typically 0%, 25%, 50%, 75%, and 100% of span) to verify accuracy and linearity throughout—rather than just at a single reference point.
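The 5-point scheme can be sketched as follows: generate evenly spaced test points across the span, then subtract each reference value from the instrument's reading. The function names and the sample readings are hypothetical.

```python
def calibration_points(low: float, high: float, n: int = 5) -> list:
    """Return n evenly spaced test points from low to high (inclusive)."""
    if n < 2:
        raise ValueError("need at least two points")
    step = (high - low) / (n - 1)
    return [low + i * step for i in range(n)]


def deviations(reference_values, readings) -> list:
    """As-found error at each point: instrument reading minus reference value."""
    if len(reference_values) != len(readings):
        raise ValueError("one reading is required per reference value")
    return [reading - ref for ref, reading in zip(reference_values, readings)]


# Hypothetical gauge with a 0 to 100 unit span.
points = calibration_points(0, 100)
print(points)  # [0.0, 25.0, 50.0, 75.0, 100.0]

# Made-up as-found readings at each point, for illustration only.
readings = [0.0, 25.5, 50.5, 75.5, 101.0]
print(deviations(points, readings))  # [0.0, 0.5, 0.5, 0.5, 1.0]
```

Checking the full span this way exposes linearity problems, such as an error that grows toward the top of the range, that a single-point check would miss.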

Step 5 – Evaluate Results and Adjust

With as-found data in hand, assess results against defined tolerance or uncertainty limits:

- Within tolerance — confirm and tag the instrument for continued use

- Out of tolerance — adjust, re-measure, and assess whether any previously measured product was affected

Document the magnitude and direction of the deviation. A consistent drift pattern usually points to predictable wear, which calls for shorter calibration intervals or equipment replacement.
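One way to express this evaluation step is a small helper that finds the worst as-found error, notes its direction, and compares it to the tolerance. This is a simplified sketch: the function name and return format are assumptions, and it ignores measurement uncertainty, which a full decision rule would take into account.

```python
def evaluate_as_found(errors, tolerance: float) -> dict:
    """Classify as-found errors (reading minus reference) against +/- tolerance."""
    if not errors:
        raise ValueError("at least one as-found error is required")
    worst = max(errors, key=abs)  # error with the largest magnitude
    if worst > 0:
        direction = "high"
    elif worst < 0:
        direction = "low"
    else:
        direction = "none"
    return {
        "worst_error": worst,
        "direction": direction,
        "in_tolerance": abs(worst) <= tolerance,
    }


# Hypothetical errors in arbitrary units against a +/-5 tolerance.
print(evaluate_as_found([1, -2, 3], tolerance=5))
print(evaluate_as_found([-7, 2], tolerance=5))
```

The second call reports an out-of-tolerance instrument reading low, which is the case that triggers adjustment, re-measurement, and a review of previously measured product.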

Step 6 – Document, Label, and Schedule the Next Calibration

A valid calibration record must contain:

- Equipment identification and serial number

- Calibration date and technician name

- Standard used with its traceability reference

- As-found and as-left readings

- Uncertainty limits and tolerance specifications

- Next calibration due date

Apply a physical tag or label on the instrument. ISO/IEC 17025:2017 Clause 7.8.4 mandates that calibration certificates include measurement uncertainty, environmental conditions, and a statement identifying metrological traceability — records missing these elements are not auditable by customers or third-party auditors.
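The record fields listed above map naturally onto a simple data structure. The sketch below is one possible layout, not a prescribed schema: the class name, field names, and the completeness check are all hypothetical.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import List


@dataclass
class CalibrationRecord:
    equipment_id: str
    serial_number: str
    calibration_date: date
    technician: str
    standard_used: str
    traceability_reference: str
    as_found: List[float]
    as_left: List[float]
    tolerance: float
    uncertainty: float
    next_due: date


def missing_fields(record: CalibrationRecord) -> List[str]:
    """Names of fields left empty (None, empty string, or empty list)."""
    return [f.name for f in fields(record)
            if getattr(record, f.name) in (None, "", [])]


# Made-up record with the traceability reference left blank.
record = CalibrationRecord(
    equipment_id="MIC-014", serial_number="SN12345",
    calibration_date=date(2024, 1, 15), technician="J. Doe",
    standard_used="Gage block set", traceability_reference="",
    as_found=[0.5, 0.5], as_left=[0.0, 0.0],
    tolerance=1.0, uncertainty=0.2, next_due=date(2025, 1, 15),
)
print(missing_fields(record))  # ['traceability_reference']
```

A check like this catches incomplete records at the time they are written, rather than during an audit.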

Key Calibration Standards, Rules, and Measurement Ratios

The 4:1 and 10:1 Calibration Rules

The Test Accuracy Ratio (TAR) originated in mid-20th century military standards. The 4:1 rule requires the reference standard used during calibration to be at least four times more accurate than the specification being verified—ensuring measurement uncertainty doesn't compromise the validity of the calibration result.

Many industry benchmarks recommend pushing this to 10:1 where possible. Sarkinen Calibrating's linear calibration measurements exceed the standard 10:1 ratio, achieving accuracy to 1.0 parts per million (one millionth of an inch per inch) with NIST-traceable standards.

That said, legacy TAR is inadequate for modern precision manufacturing — it only compares accuracy tolerances and ignores environmental factors, equipment repeatability, and human error.

Modern facilities should adopt the Test Uncertainty Ratio (TUR) as defined by ANSI/NCSL Z540.3, which compares the tolerance span to twice the 95% expanded uncertainty of the measurement process.
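Following the definition above, TUR can be sketched as the tolerance span divided by twice the 95% expanded uncertainty. The function name and the example numbers are hypothetical.

```python
def tur(lower_limit: float, upper_limit: float,
        expanded_uncertainty_95: float) -> float:
    """Test Uncertainty Ratio: tolerance span / (2 x 95% expanded uncertainty)."""
    if expanded_uncertainty_95 <= 0:
        raise ValueError("expanded uncertainty must be positive")
    if upper_limit <= lower_limit:
        raise ValueError("upper_limit must exceed lower_limit")
    return (upper_limit - lower_limit) / (2 * expanded_uncertainty_95)


# Hypothetical example: a +/-10 unit tolerance measured by a process
# with a 95% expanded uncertainty of 2.5 units.
print(tur(-10, 10, 2.5))  # 4.0
```

For a symmetric tolerance this reduces to the tolerance half-width divided by the expanded uncertainty. Unlike TAR, the uncertainty term is meant to cover the whole measurement process, including environment and repeatability, not only the reference standard's stated accuracy.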

NIST Traceability and Why It Matters

NIST-traceable calibration means every reference standard in the calibration chain can be traced back to measurements maintained by the National Institute of Standards and Technology. This creates an unbroken, documented link to nationally recognized accuracy — making results defensible to customers and auditors alike.

According to NIST policy, traceability is a property of the measurement result, not the laboratory or instrument itself. Many shops claim their lab is "NIST Traceable," but valid traceability requires complete documentation of the unbroken chain and expanded uncertainties on every calibration certificate.

Precision vs. Accuracy

These terms have distinct meanings in calibration standards:

- Precision is the repeatability of a result—getting the same reading consistently

- Accuracy is how close the reading is to the true value

Both must be verified. A precise but inaccurate instrument still produces wrong data. For example, a CNC machine that repeatedly positions to 10.005" when commanded to 10.000" is precise (repeatable) but inaccurate (offset by 0.005"). Calibration must both correct the offset and verify repeatability.
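The distinction can be made concrete with a short sketch that separates the average offset (accuracy) from the spread of repeated positions (precision). The function name and the measured positions are made up for illustration. Using the sample standard deviation as the repeatability figure is a simplifying assumption, and formal machine tool standards define repeatability more specifically.

```python
from statistics import mean, stdev


def bias_and_repeatability(commanded: float, measured: list) -> tuple:
    """Return (mean offset from commanded, sample standard deviation)."""
    if len(measured) < 2:
        raise ValueError("need at least two measurements")
    errors = [m - commanded for m in measured]
    return mean(errors), stdev(errors)


# Made-up positions (inches) for a machine commanded to 10.000".
bias, spread = bias_and_repeatability(10.000, [10.004, 10.005, 10.006])
print(f"bias: {bias:.4f} in, repeatability (1 sigma): {spread:.4f} in")
```

Here the machine repeats to about 0.001" but sits about 0.005" away from the commanded position, so it is precise but not accurate. Compensation addresses the offset, and the spread shows how much variation remains afterward.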

Calibration Frequency Considerations

Intervals are driven by:

- Instrument type and manufacturer recommendations

- Usage intensity and environmental conditions

- Risk assessment and criticality to product quality

They are not arbitrary time windows. An instrument that drifts out of tolerance halfway through a 12-month cycle may have produced months of suspect product before the next check catches it. Common intervals range from monthly to annually, but the key is establishing a documented schedule based on risk assessment rather than defaulting to generic time windows.
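As a rough way to reason about interval length, the sketch below estimates how many months remain before an instrument's error reaches its tolerance. It assumes drift is linear and constant, which real instruments may not follow. The function name and example numbers are hypothetical, and the drift rate would come from as-found history.

```python
def months_until_limit(error_now: float, drift_per_month: float,
                       tolerance: float) -> float:
    """Months until |error| reaches tolerance, assuming constant linear drift."""
    if abs(error_now) >= tolerance:
        return 0.0                      # already at or beyond the limit
    if drift_per_month == 0:
        return float("inf")             # no drift: limit is never reached
    # Drift heads toward +tolerance when positive, -tolerance when negative.
    limit = tolerance if drift_per_month > 0 else -tolerance
    return (limit - error_now) / drift_per_month


# Hypothetical instrument: currently +2 units off, drifting +1 unit per
# month, with a +/-8 unit tolerance.
print(months_until_limit(2, 1, 8))  # 6.0
```

In this example the instrument would cross its limit about six months in, so a 12-month interval would leave roughly half a year of suspect measurements. An estimate like this is an input to the risk assessment, not a replacement for it.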

How Sarkinen Calibrating Can Help

Sarkinen Calibrating approaches calibration from an operations-first perspective. Founded by Larry, a former machine operator, the company goes beyond tolerance checks to identify how calibration solutions can improve production quality and efficiency for manufacturing facilities in Portland, OR and SW Washington.

Practical Advantages of a Local Partner

Working with a local precision calibration provider delivers tangible benefits:

- Faster response times for Portland, OR and SW Washington facilities compared to out-of-area providers

- NIST-traceable measurements exceeding standard accuracy ratios (1.0 parts per million for linear calibration)

- Predictable maintenance schedules that eliminate unexpected downtime

- Operations experience from a founder who understands production realities

Sarkinen Calibrating's services include linear axis calibration using a Renishaw laser interferometer, ballbar testing for machine condition monitoring, rotary axis calibration, surface plate leveling and flatness measurement, and machine level and alignment services.

Building a Calibration Partnership

Consistent calibration is an ongoing process that protects quality, prevents downtime, and gives customers confidence that machines will perform as promised. Portland, OR and SW Washington manufacturers can contact Sarkinen Calibrating at (360) 907-3058 to discuss their needs and establish a predictable maintenance program built around their production schedule.

Frequently Asked Questions

How do you measure calibration?

Calibration is measured by comparing an instrument's output to a known reference standard across its operating range, recording the deviation at each point, and determining whether the deviation falls within acceptable tolerance limits defined by the accuracy specification.

What is the 4 to 1 calibration rule?

The 4:1 rule (Test Accuracy Ratio) requires the reference standard used during calibration to be at least four times more accurate than the specification being verified. This ensures measurement uncertainty doesn't compromise the validity of the calibration result.

What is the 5 point calibration test?

A 5-point calibration involves measuring an instrument's output at five evenly spaced points across its full operating range (typically 0%, 25%, 50%, 75%, and 100% of span) to verify accuracy and linearity throughout.

What are the 5 requirements for a calibration standard?

A valid calibration standard must meet five conditions:

- More accurate than the instrument being calibrated (typically 4:1 or better)

- Traceable to NIST or international standards

- Used within a controlled environment

- Maintained on its own calibration schedule

- Accompanied by a valid certificate documenting traceability and uncertainty

How often should equipment be calibrated?

Calibration frequency depends on instrument type, usage intensity, manufacturer recommendations, environmental conditions, and risk level. Common intervals range from monthly to annually, but the key is establishing a documented schedule based on risk assessment rather than following arbitrary fixed intervals.

What is NIST-traceable calibration?

NIST-traceable calibration means every reference standard in the calibration chain can be traced back to measurements maintained by the National Institute of Standards and Technology. This unbroken, documented chain makes results defensible to customers and auditors alike.