Introduction

When a machined part fails inspection or a production run gets scrapped, the root cause often traces back to test and measurement equipment that isn't performing accurately—and most manufacturers don't realize it until they've already lost time and money. A single miscalibrated micrometer can systematically produce out-of-spec parts, creating a false sense of security because the readings appear repeatable. Industry data suggests poor calibration costs large manufacturers an average of $4,000,000 annually, with stopped production reaching up to $22,000 per minute in some sectors.

Test and measurement (T&M) in manufacturing means using instruments and procedures systematically to verify that parts, machines, and processes meet defined specifications.

This post covers what every production facility needs to understand:

- The four types of measurement scales

- Common T&M techniques used on the shop floor

- Critical equipment categories and what they measure

- The difference between accuracy and precision

- Why regular calibration keeps your measurement systems trustworthy

TLDR

- T&M verifies that manufactured parts and machines meet specifications, forming the backbone of quality control

- Ratio-scale measurements (millimeters, PSI, volts) give you the precise, comparable data that makes quality control actionable

- Essential equipment includes dimensional hand tools, CMMs, pressure gauges, and electrical test instruments

- Precision and accuracy aren't the same thing — you need both: consistency in your results and correctness against a known standard

- Regular NIST-traceable calibration catches measurement drift before it becomes scrapped parts, warranty claims, or unplanned downtime

What Is Test and Measurement in a Manufacturing Context?

Test and measurement is the systematic process of using instruments and standardized procedures to evaluate whether a product, component, or machine meets a defined specification. In manufacturing, this process delivers the objective data needed to accept or reject parts, verify process capability, and keep customers confident in your output.

Testing vs. Measurement: Related but Distinct

Testing checks whether something works or meets pass/fail criteria—does this hydraulic valve hold 3,000 PSI without leaking? Measurement quantifies a physical property against a standard—what is the actual bore diameter of this cylinder in millimeters? On the production floor, these activities work together: you measure the diameter, then test whether that measurement falls within the tolerance band specified on the engineering drawing.
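The measure-then-test sequence can be sketched in a few lines of Python. This is a minimal illustration, not a production inspection routine: the function name `within_tolerance`, the bore reading, and the 50.000 ± 0.025 mm callout are all hypothetical values chosen for the example.

```python
def within_tolerance(measured: float, nominal: float, tol: float) -> bool:
    """Return True when the measured value lies inside nominal +/- tol."""
    return abs(measured - nominal) <= tol


# Measurement step: a bore diameter read from the instrument, in millimeters.
bore_mm = 50.012

# Test step: compare the reading against a hypothetical drawing callout
# of 50.000 +/- 0.025 mm.
print(within_tolerance(bore_mm, nominal=50.000, tol=0.025))
```

The measurement produces a number; the test turns that number into an accept or reject decision.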

Core Objectives in Production Environments

T&M serves four essential purposes:

- Ensuring part conformance to engineering drawings and customer specifications

- Preventing defective output from reaching customers and triggering warranty claims

- Verifying new equipment meets promised specifications while still under warranty

- Identifying process drift before it becomes a costly scrap and rework problem

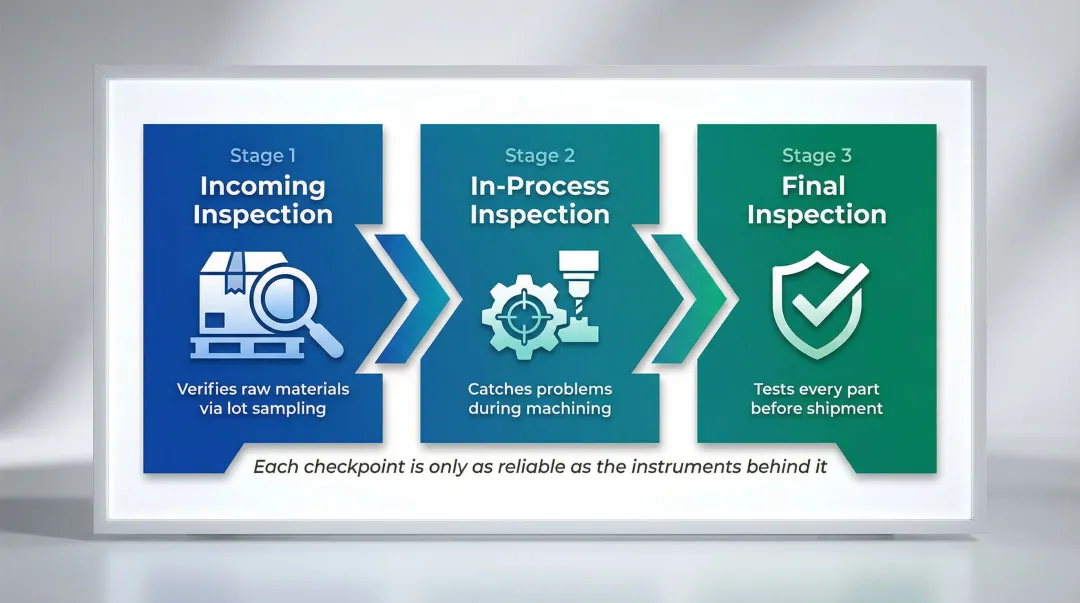

Where T&M Fits in the Workflow

Quality systems rely on a multi-stage inspection approach. Each stage serves a distinct purpose:

- Incoming inspection — Verifies raw materials before they enter production, typically using lot sampling to catch supplier defects early.

- In-process inspection — Catches problems during machining before hundreds of parts are affected.

- Final inspection — The tightest checkpoint, where many manufacturers test every single part before shipment.

Each of these checkpoints is only as reliable as the instruments behind it. An out-of-calibration caliper at incoming inspection lets defective material into your process; the same problem at final inspection ships bad parts to customers.

The Four Types of Measurement Explained

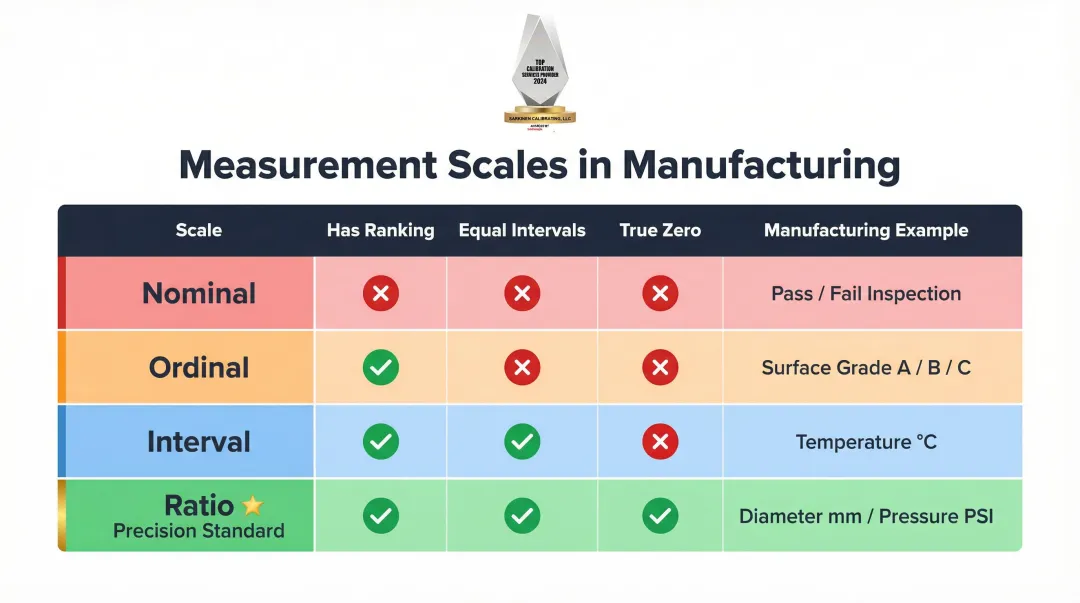

Understanding the four scales of measurement—nominal, ordinal, interval, and ratio—determines how you can use and analyze quality data. Applying the wrong statistical method to a measurement type produces meaningless results and false conclusions.

Nominal Measurement: Categories Without Ranking

Nominal measurement groups items into categories — no ranking, no numbers. Labeling a part as "pass" or "fail," categorizing defect types (scratch vs. dent), or identifying material grades are all nominal. This data tells you what something is, not how much. You can count frequencies and identify the most common category (mode), but operations like averages are meaningless here.

Ordinal Measurement: Ranking Without Consistent Intervals

Ordinal scales rank items in order, but the gaps between values aren't consistent. Surface finish visual grades (A/B/C) and defect severity rankings work this way — Grade A might be significantly better than B, while B and C are nearly identical. The intervals aren't equal. Ordinal data supports comparative decisions but doesn't hold up under precise calculations.

Interval and Ratio Measurement: The Foundation of Precision Manufacturing

Interval scales have consistent spacing between values but no true zero — temperature in Celsius is one example. Ratio scales add a true zero to the mix, which enables the full range of mathematical operations. Dimensions in millimeters, pressure in PSI, and torque in newton-meters are all ratio measurements.

These two scales are the workhorses of precision manufacturing. When you measure shaft diameter with a micrometer or verify hydraulic pressure with a gauge, you're working with ratio data. That data can be averaged, charted, and analyzed to catch process drift before it produces defective parts.

Here's how the four scales compare at a glance:

| Scale | Ranking | Equal Intervals | True Zero | Example |
|---|---|---|---|---|
| Nominal | No | No | No | Pass/Fail, defect type |
| Ordinal | Yes | No | No | Surface finish grade (A/B/C) |
| Interval | Yes | Yes | No | Temperature (°C) |
| Ratio | Yes | Yes | Yes | Diameter (mm), pressure (PSI) |
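To make the practical consequence concrete, here is a short Python sketch of which summary statistic suits each scale. The sample data and the `grade_rank` mapping are invented for illustration; only the standard-library `statistics` module is used.

```python
from statistics import mean, mode

# Nominal: categories only, so the mode (most frequent value) is the usable summary.
defect_types = ["scratch", "dent", "scratch", "burr", "scratch"]
most_common_defect = mode(defect_types)

# Ordinal: grades can be ranked, so a median is meaningful, but an average is not.
grade_rank = {"A": 1, "B": 2, "C": 3}  # hypothetical ranking of visual grades
grades = ["A", "B", "B", "C", "A"]
ranked = sorted(grades, key=grade_rank.get)
median_grade = ranked[len(ranked) // 2]  # middle item of an odd-length list

# Ratio: equal intervals and a true zero, so means and ratios are valid.
diameters_mm = [25.001, 24.998, 25.003, 25.000, 24.999]
mean_diameter = mean(diameters_mm)

print(most_common_defect, median_grade, round(mean_diameter, 4))
```

Averaging the defect labels or the A/B/C grades would produce a number, but not one that means anything, which is why the scale has to be identified before the analysis is chosen.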

Common Test and Measurement Techniques Used in Production

Dimensional Measurement: Direct vs. Indirect

Direct measurement uses an instrument's own scale to determine dimensions—placing a caliper on a part and reading the dimension directly. Indirect (comparative) measurement determines the difference between a target and a reference standard—using a dial indicator zeroed against a gauge block to check part variation.

| Technique | Instruments | Advantages | Limitations |
|---|---|---|---|
| Direct | Calipers, micrometers, CMMs | Wide measurement range | Susceptible to scale reading errors |
| Indirect | Dial gauges, comparators | Highly accurate for specific tolerances | Strictly limited measurement range |

Indirect measurement excels in high-volume production where a master gauge sets the zero point, minimizing operator-induced variation.
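The arithmetic behind comparative measurement is simple: the part size is the certified size of the master plus the signed deviation shown on the indicator. The sketch below assumes a hypothetical 25.000 mm gauge block and made-up indicator readings.

```python
MASTER_MM = 25.000  # certified size of the hypothetical gauge block used to zero the indicator


def part_size_mm(indicator_deviation_mm: float, master_mm: float = MASTER_MM) -> float:
    """Part size = master size + signed deviation read from the zeroed indicator."""
    return master_mm + indicator_deviation_mm


# Deviations read off the dial indicator for three parts, in millimeters.
deviations_mm = [+0.004, -0.002, +0.001]
sizes_mm = [round(part_size_mm(d), 3) for d in deviations_mm]
print(sizes_mm)
```

Because the operator only reads a small deviation from zero, the result depends far less on how the scale is read than a direct measurement does.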

Functional Testing

Functional testing verifies that a component performs as intended under operating conditions. Examples include pressure-testing a hydraulic component to 4,000 PSI, running a CNC axis through its full range of motion to check for binding, or verifying electrical continuity in a wiring harness.

This differs from dimensional inspection. A part can meet all dimensional specifications but still fail functionally if material properties or assembly issues create performance problems.

Statistical Process Control as a Measurement Strategy

SPC uses repeated measurements over time to detect when a process starts to drift, before it goes out of tolerance and defective parts are produced. Rather than inspecting finished parts and scrapping rejects, SPC monitors the process itself and triggers corrective action at the first sign of drift.

Implementing SPC and the 7 Basic Quality Tools reduced paint shop defects by 70% in one automotive factory and cut out-of-spec machined parts by 62% in a precision machining operation. What drives those results:

- Measurement frequency: Higher sampling rates catch drift earlier, before nonconforming parts are produced

- Consistency: Automated, in-line systems remove operator-to-operator variation from the data

- Real-time feedback: Operators see process shifts as they happen and can correct before scrap accumulates
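One common way to put this into practice is an individuals (I-MR) control chart. The sketch below is an assumption about how a shop might implement it, not a description of the case studies above: the function names and all readings are hypothetical, and the constant 2.66 is the standard I-MR factor (3 divided by d2 = 1.128 for moving ranges of two points).

```python
from statistics import mean


def individuals_limits(baseline):
    """Control limits for an individuals chart from in-control baseline readings."""
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    mr_bar = mean(moving_ranges)
    # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of two consecutive points.
    return center - 2.66 * mr_bar, center, center + 2.66 * mr_bar


def out_of_control(values, lcl, ucl):
    """Return (index, value) pairs that fall outside the control limits."""
    return [(i, v) for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical shaft diameters (mm) from a stable period, then new readings.
baseline = [10.01, 9.99, 10.00, 10.02, 9.98, 10.01, 10.00, 9.99]
lcl, center, ucl = individuals_limits(baseline)

new_readings = [10.00, 10.02, 10.05, 10.09]
print(out_of_control(new_readings, lcl, ucl))
```

Control limits come from the process's own variation, not from the drawing tolerance, so a point can be flagged while the part is still within specification. That early warning is what lets operators correct the process before scrap accumulates.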

Essential Test and Measurement Equipment in Manufacturing

Dimensional Hand Tools

Hand tools are the most common entry-level T&M instruments in any machine shop, but operators must understand the difference between display resolution and actual accuracy.

| Tool Type | Typical Resolution | Typical Accuracy | Common Use |
|---|---|---|---|
| Digital Calipers | 0.0005" / 0.01 mm | ±0.001" / ±0.02 mm | General dimensional inspection |
| Outside Micrometers | 0.00005" / 0.001 mm | ±0.0001" / ±0.002 mm | Precision diameter measurement |
| Dial Indicators | 0.001" / 0.01 mm | ±0.001" / ±0.015 mm | Runout and comparative measurement |
| Height Gauges | 0.0005" / 0.01 mm | ±0.0015" / ±0.04 mm | Vertical dimension verification |

Accuracy degrades over longer measurement lengths—a caliper accurate to ±0.02 mm at 50 mm may degrade to ±0.04 mm at 300 mm. All specifications apply at a reference temperature of 20°C.
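The 20°C reference matters because parts grow and shrink with temperature. As a rough sketch, linear thermal expansion is length × coefficient × temperature difference. The coefficient below (11.5 × 10⁻⁶ per °C) is an assumed typical value for steel, and the part length and shop temperature are hypothetical.

```python
ALPHA_STEEL_PER_C = 11.5e-6  # assumed typical expansion coefficient for steel (1/°C)
REFERENCE_TEMP_C = 20.0      # reference temperature stated for the specifications


def thermal_growth_mm(length_mm: float, part_temp_c: float,
                      alpha_per_c: float = ALPHA_STEEL_PER_C) -> float:
    """Length change of a part relative to its size at the 20 °C reference."""
    return length_mm * alpha_per_c * (part_temp_c - REFERENCE_TEMP_C)


# A 300 mm steel part measured in a 30 °C shop.
growth_mm = thermal_growth_mm(length_mm=300.0, part_temp_c=30.0)
print(f"{growth_mm:.4f} mm")
```

Under these assumptions the part is about 0.035 mm longer than at 20°C, which is on the same order as the caliper's stated accuracy at that length. When the instrument and the part are the same material at the same temperature, much of this effect cancels out.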

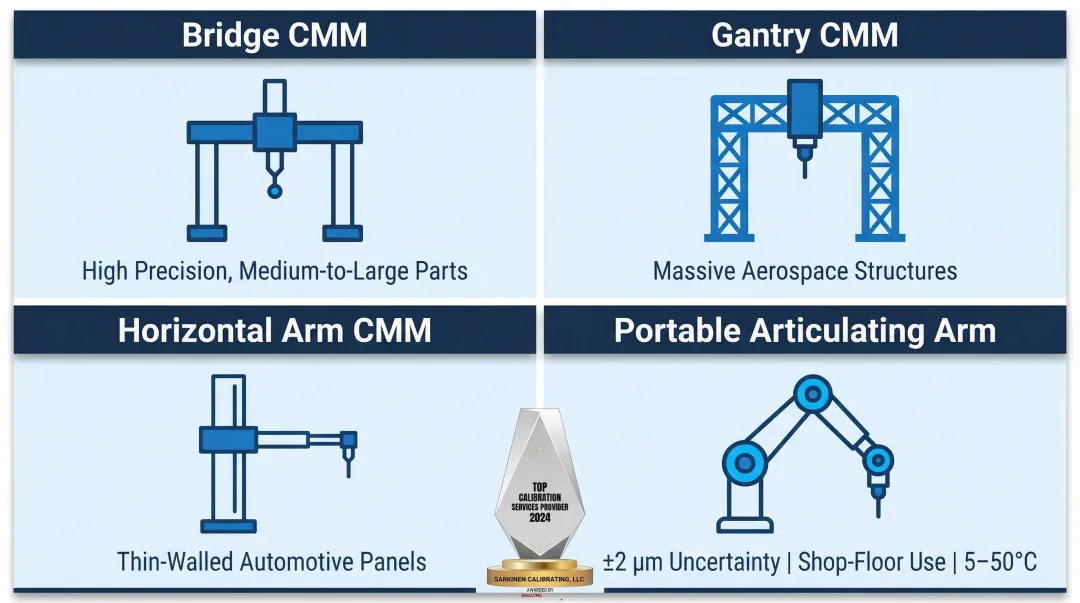

Coordinate Measuring Machines (CMMs)

CMMs use a probe to map the 3D geometry of a part and compare it against a CAD model or drawing. They measure complex parts with tight tolerances and can reach features that handheld tools cannot access or resolve accurately.

Historically isolated in climate-controlled metrology rooms, modern CMMs are increasingly hardened for shop-floor environments. Each CMM type is built around a specific application:

- Bridge CMMs — high precision for medium-to-large parts

- Gantry CMMs — built to handle massive aerospace structures

- Horizontal arm CMMs — excel at thin-walled automotive panels

- Portable articulating arms — provide ±2 μm uncertainty comparative gauging directly in the CNC cell, operating in temperatures from 5 to 50°C

Industry surveys indicate that 19% of manufacturers plan to purchase a CMM, with many budgeting over $100,000 for the equipment.

Pressure and Force Measurement Instruments

Pressure gauges, load cells, and torque wrenches with measurement capability verify machine output forces, clamping pressures, and hydraulic or pneumatic system performance. For facilities running automated or high-force machining, an unchecked measurement error here carries real consequences.

Implementing reliable torque measurement tools has been shown to reduce assembly errors by 25% in automotive manufacturing, cutting warranty claims. A single miscalibrated torque wrench can create severe safety risks—in one documented case, a torque wrench preset to 150 ft-lbs instead of the required 250 ft-lbs nearly caused wheel separation on an F-16 during taxi testing.

Electrical and Signal Measurement Tools

Beyond mechanical measurement, CNC-driven facilities depend on electrical T&M instruments to verify control signals, power supply stability, and encoder feedback. Three tools handle most of this work:

- Multimeters — verify voltage, current, and resistance in control circuits

- Oscilloscopes — capture signal timing and waveform anomalies that cause intermittent machine behavior

- Signal analyzers — validate servo performance and troubleshoot communication errors between controllers and drives

These instruments are frequently absent in mechanical shops, which is why servo and drive faults often go undiagnosed for longer than necessary.

Surface Finish Measurement Tools

Surface roughness (Ra values) and flatness are measurable quantities that affect how parts fit, seal, or wear. Profilometers use a contact stylus (typically 2 μm or 5 μm tip radius) to measure Ra, the arithmetic mean roughness value.

To ensure accurate Ra readings, ISO 4288 mandates specific cutoff wavelengths based on expected surface roughness:

| Expected Ra Range (μm) | Cutoff Wavelength (mm) | Evaluation Length (mm) |
|---|---|---|
| 0.02 to 0.1 | 0.25 | 1.25 |
| 0.1 to 2.0 | 0.8 | 4.0 |
| 2.0 to 10.0 | 2.5 | 12.5 |
| 10.0 to 80.0 | 8.0 | 40.0 |

Using an incorrect filter (e.g., 0.8 mm cutoff on a highly rough surface) filters out critical profile data, leading to falsely accepted parts and supplier disputes.
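The table above can be encoded as a small lookup so the cutoff is chosen by rule rather than from memory. This is a sketch only: the function name `select_cutoff` is hypothetical, and treating each range as exclusive at the lower bound and inclusive at the upper bound is an assumption about how boundary values are handled.

```python
# (upper Ra bound in µm, cutoff in mm, evaluation length in mm), from the table above.
RA_CUTOFF_TABLE = [
    (0.1, 0.25, 1.25),
    (2.0, 0.8, 4.0),
    (10.0, 2.5, 12.5),
    (80.0, 8.0, 40.0),
]
RA_MIN_UM = 0.02  # lower bound of the first table row


def select_cutoff(expected_ra_um: float) -> tuple[float, float]:
    """Return (cutoff_mm, evaluation_length_mm) for an expected Ra value in µm."""
    if not (RA_MIN_UM < expected_ra_um <= RA_CUTOFF_TABLE[-1][0]):
        raise ValueError(f"Ra {expected_ra_um} µm is outside the table range")
    for upper_um, cutoff_mm, eval_len_mm in RA_CUTOFF_TABLE:
        if expected_ra_um <= upper_um:
            return cutoff_mm, eval_len_mm
    raise AssertionError("unreachable: range was checked above")


print(select_cutoff(1.6))  # a typical machined finish
print(select_cutoff(6.3))  # a rougher surface needs the longer cutoff
```

Each evaluation length in the table is five times the cutoff, so the lookup could also derive it from the cutoff instead of storing it.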

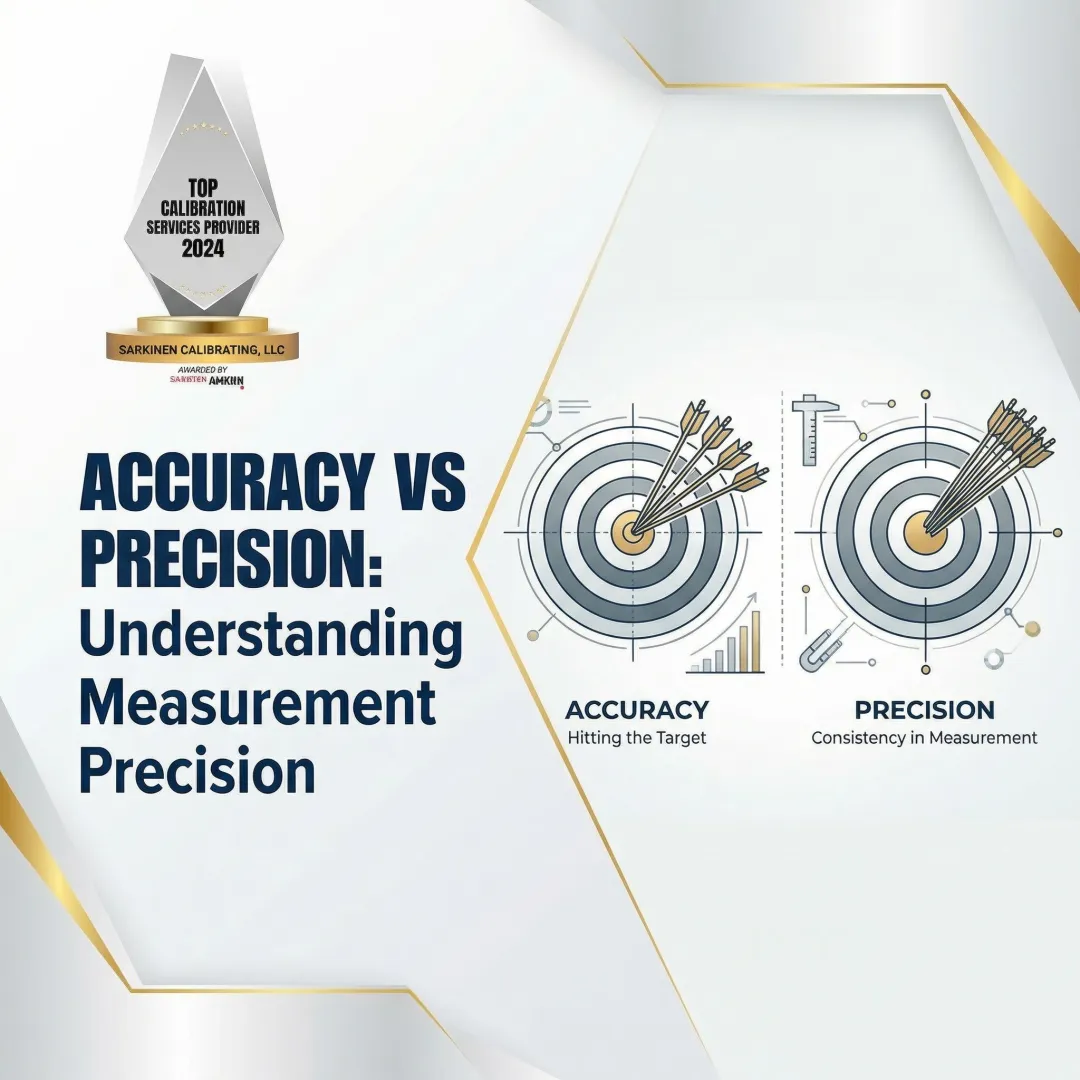

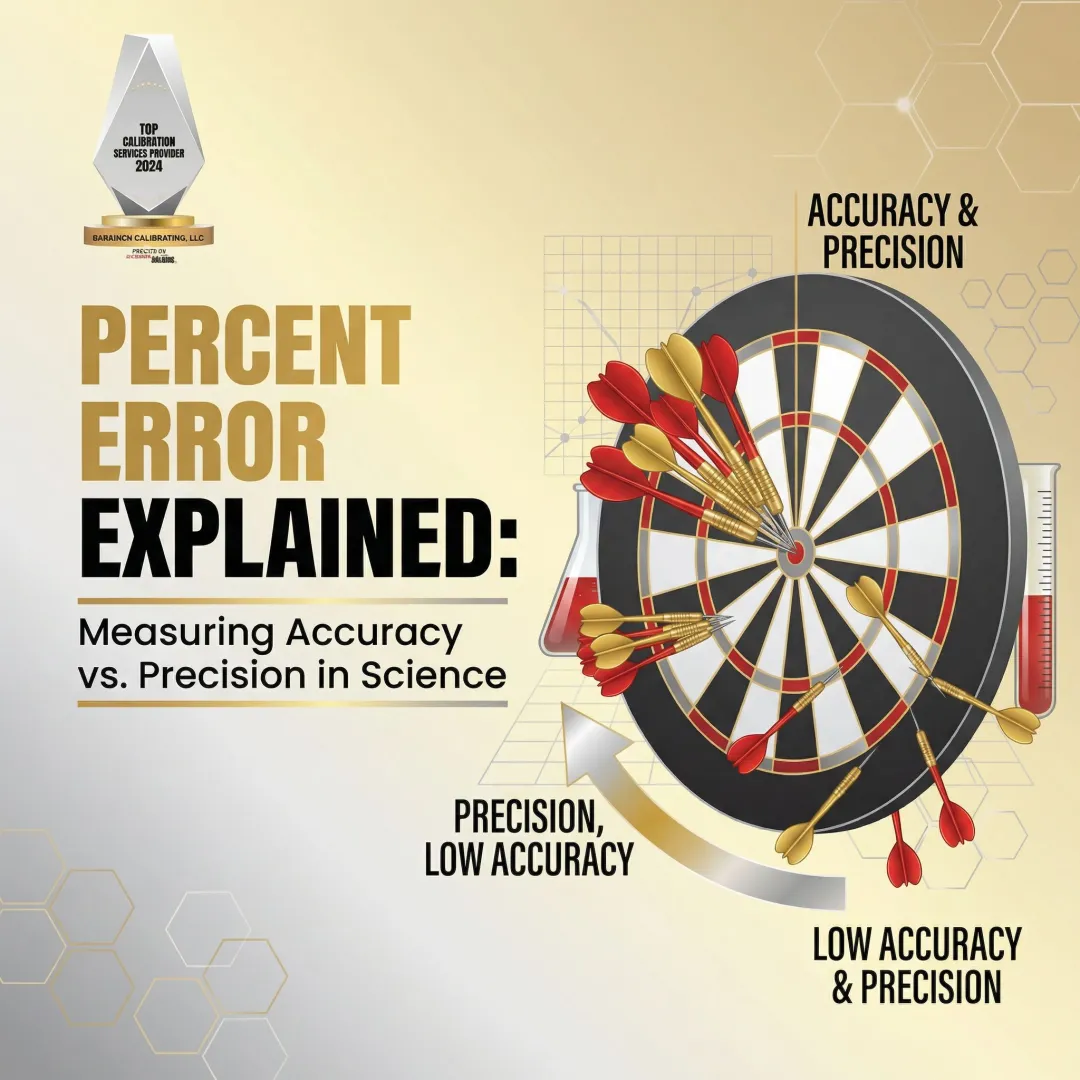

Accuracy vs. Precision: Why the Difference Matters on the Shop Floor

Measurement precision is the closeness of agreement between independent test results obtained under identical conditions; it reflects random error and dispersion. Measurement accuracy is the closeness of agreement between a measured value and the true reference value; it combines precision with trueness, which is the absence of systematic bias.

A Concrete Example

A dial caliper that consistently reads 0.003" high is precise (repeatable) but not accurate (biased). One whose readings scatter widely around the true value may be correct on average, but it is imprecise, so no single reading can be trusted. A properly calibrated instrument that produces tight, repeatable readings centered on the true value is both.
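Both properties can be estimated from repeated readings of a known reference: the mean offset from the reference estimates bias, and the standard deviation estimates spread. The readings below are invented to mirror the two problem cases, and `bias_and_spread` is a hypothetical helper name.

```python
from statistics import mean, stdev


def bias_and_spread(readings, reference):
    """Return (bias, spread): mean offset from the reference and sample std deviation."""
    return mean(readings) - reference, stdev(readings)


reference_in = 1.0000  # certified size of a hypothetical reference standard, inches

caliper_a = [1.0030, 1.0031, 1.0029, 1.0030, 1.0030]  # repeatable but offset
caliper_b = [0.9975, 1.0028, 0.9990, 1.0021, 0.9986]  # scattered around the reference

for name, readings in (("A", caliper_a), ("B", caliper_b)):
    bias, spread = bias_and_spread(readings, reference_in)
    print(f"caliper {name}: bias {bias:+.4f} in, spread {spread:.4f} in")
```

Caliper A shows a large bias with a very small spread, while caliper B shows almost no bias with a large spread. Neither is fit for inspection until both numbers are small relative to the tolerance being checked.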

Why Precision Without Accuracy Is Dangerous

A worn or uncalibrated instrument may give repeatable but systematically wrong readings. This is more dangerous in production than random variation because operators trust the consistency without questioning the offset. Every part gets machined to the wrong dimension with perfect repeatability.

A documented NAVAIR case study shows exactly how this plays out. A microscopic chip on a calibration proving ring's vibrating reed introduced systematic bias into a master calibration standard.

The result: 710 miscalibrated torque tools across the fleet, requiring immediate recall and recalibration of 254 critical tools. Wrenches that felt and read perfectly normal could have caused catastrophic structural failures.

Both Properties Are Required Simultaneously

That case study illustrates the core rule: reliable quality control requires instruments that are simultaneously precise and accurate. When one property is missing, the consequences differ but the outcome is equally damaging:

- Precise but inaccurate — every part is consistently wrong; the shop produces scrap with confidence

- Accurate but imprecise — readings scatter around the true value, making pass/fail decisions unreliable

- Both present — repeatable results centered on the true value, which is the only foundation for trusted production

Regular calibration against a traceable standard is how shops confirm both properties hold — not just on delivery, but over the machine's working life.

How Calibration Keeps Your Test and Measurement Equipment Reliable

Calibration is the process of comparing a measurement instrument against a known reference standard and determining any deviation, along with associated measurement uncertainties. It closes the loop between what an instrument reads and what is actually true.

Even high-quality instruments drift over time due to wear, temperature changes, and mechanical stress. Drift is inevitable; the real risk is failing to detect it before it causes defects. Periodic calibration is how that drift gets caught.

NIST Traceability: The Unbroken Chain

Measurements must be traceable through an unbroken chain of comparisons back to national or international standards to be defensible in quality audits, customer contracts, or regulatory inspections.

Metrological traceability is a property of the measurement result (not the instrument itself), requiring documented calibration certificates that explicitly state measurement uncertainty and identify the specific SI-traceable reference standards used.

Sarkinen Calibrating provides NIST-traceable calibration services for manufacturing facilities in Portland, OR, and SW Washington. Their measurements reach 1.0 parts per million accuracy, well beyond the 10:1 ratio required for proper calibration, so measurement uncertainty itself never masks real machine performance issues.

TAR vs. TUR: Why the Old Standard Is Obsolete

For decades, MIL-STD-120 mandated a 10:1 or 4:1 Test Accuracy Ratio (TAR) — the ratio of the unit under test tolerance to the published accuracy of the reference standard. The problem: TAR ignores environmental factors, operator error, and instrument resolution.

Modern calibration practice under ISO/IEC 17025 centers on measurement uncertainty, which is what the Test Uncertainty Ratio (TUR) captures. TUR accounts for the full measurement process: reference standard uncertainty, environmental influences, repeatability, resolution, and operator error. A TUR of at least 4:1 is the widely used benchmark for conformity assessment. Relying on TAR alone exposes manufacturers to hidden false-accept risks.
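A worked comparison shows how the two ratios can disagree. The sketch below assumes the common approach of combining independent standard uncertainties by root-sum-of-squares and expanding with a coverage factor of k = 2. Every number is hypothetical, and the function names are illustrative rather than taken from any standard.

```python
import math


def expanded_uncertainty(standard_uncertainties, k: float = 2.0) -> float:
    """Combine independent standard uncertainties (root-sum-of-squares), then expand by k."""
    combined = math.sqrt(sum(u ** 2 for u in standard_uncertainties))
    return k * combined


def tar(uut_tolerance: float, reference_accuracy: float) -> float:
    """Test Accuracy Ratio: UUT tolerance over the reference standard's published accuracy."""
    return uut_tolerance / reference_accuracy


def tur(uut_tolerance: float, expanded_u: float) -> float:
    """Test Uncertainty Ratio: UUT tolerance over the expanded measurement uncertainty."""
    return uut_tolerance / expanded_u


# Hypothetical micrometer calibration, all values in mm (plus/minus).
uut_tolerance = 0.002
reference_accuracy = 0.0002
# Assumed standard uncertainties: reference, environment, repeatability, resolution.
components = [0.0001, 0.0002, 0.00015, 0.0001]

u95 = expanded_uncertainty(components)
print(f"TAR = {tar(uut_tolerance, reference_accuracy):.1f}:1")
print(f"TUR = {tur(uut_tolerance, u95):.2f}:1")
```

With these assumed inputs the TAR is a comfortable 10:1, yet the TUR falls below 4:1 once environment, repeatability, and resolution are included. That gap is the hidden false-accept risk described above.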

The Cost of Skipping Calibration

An out-of-calibration instrument creates downstream problems that compound quickly:

- Scrap and rework from parts cut to the wrong specification

- Warranty claims when defective product reaches customers

- Failed audits that jeopardize customer contracts

Frame calibration not as a maintenance cost but as production insurance that reduces the risk of unexpected downtime. The alternative, discovering calibration problems after defective parts have shipped, typically costs far more than a calibration program.

Frequently Asked Questions

What is test and measurement?

Test and measurement is the use of instruments and standardized procedures to evaluate whether a physical property, component, or system meets a defined specification. It underpins quality control in manufacturing and engineering by providing objective data for accept/reject decisions.

What are the objectives of test and measurement?

The core objectives are:

- Verifying part conformance to specifications

- Detecting process drift before it produces defects

- Ensuring equipment performs to spec

- Preventing defective output from reaching customers

- Providing documented evidence of quality for auditors

What is an example of a test and measurement?

A common example is using a micrometer to measure the diameter of a machined shaft, then comparing the reading against the engineering drawing tolerance (e.g., 1.250" ±0.002") to determine pass or fail. The micrometer provides the measurement; the tolerance comparison is the test.

What are the four types of measurement?

The four scales are nominal (categories with no order), ordinal (ranked categories without equal intervals), interval (equal intervals but no true zero), and ratio (equal intervals with a true zero). Ratio measurement—dimensions in millimeters, pressure in PSI—is the most common and analytically useful in precision manufacturing.

What is the difference between accuracy and precision in measurement?

Accuracy describes how close a reading is to the true value; precision describes how consistently the same reading is produced under repeated measurements. Reliable test and measurement requires both at the same time—a tool that is precise but not accurate will consistently produce wrong results.

How often should test and measurement equipment be calibrated?

Calibration frequency depends on instrument type, usage intensity, and industry requirements. Most production environments establish scheduled intervals (often annually for micrometers and calipers, more frequently for critical tools) and recalibrate after any impact, repair, or suspected drift.
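That rule (a scheduled interval, plus recalibration after any impact, repair, or suspected drift) can be captured in a simple due-date check. The function name, the 365-day interval, and the dates below are hypothetical; a real program would pull these from the shop's calibration records.

```python
from datetime import date, timedelta


def calibration_due(last_calibrated: date, interval_days: int, today: date,
                    event_since_calibration: bool = False) -> bool:
    """True when the interval has elapsed or an impact, repair, or suspected drift was logged."""
    return event_since_calibration or today >= last_calibrated + timedelta(days=interval_days)


last_cal = date(2024, 3, 1)

# Six months into an annual interval, nothing unusual logged.
print(calibration_due(last_cal, 365, today=date(2024, 9, 1)))

# Same date, but the tool was dropped since its last calibration.
print(calibration_due(last_cal, 365, today=date(2024, 9, 1), event_since_calibration=True))

# One year after the last calibration.
print(calibration_due(last_cal, 365, today=date(2025, 3, 1)))
```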