Introduction

Confusing accuracy and precision leads to real production losses—yet in everyday conversation, the two words are used interchangeably. In measurement science and manufacturing, they describe fundamentally different properties.

A CNC machine can produce parts that cluster tightly around the same dimension every single time, appearing perfectly stable on a control chart, yet every part is out of specification. That's high precision with low accuracy—one of the most dangerous conditions in quality control because the process looks stable while consistently producing scrap.

For any operation that depends on repeatable, reliable output, understanding both concepts—and how they interact—is non-negotiable. This article breaks down each in practical terms, covers the four combinations every manufacturer should recognize, and shows how calibration against NIST-traceable standards restores both when they drift.

TL;DR

- Accuracy measures how close a result is to the true value; precision measures how consistently repeated measurements agree with each other

- A system can be accurate without being precise, precise without being accurate, both, or neither—and each combination points to a different root cause

- Precision breaks into two components: repeatability (same setup, short timeframe) and reproducibility (across operators, instruments, or time)

- Systematic errors drive inaccuracy; random errors drive imprecision—identifying which determines the correct fix

- NIST-traceable calibration verifies and restores both accuracy and precision

Accuracy and Precision Defined: What Each Term Actually Means

What Accuracy Really Means

Accuracy is the degree of closeness between a measured value (or the mean of multiple measurements) and the true or accepted reference value. In simple terms, accuracy answers the question: How correct is this measurement?

If you measure a part specified at 25.000 mm and your instrument consistently reads 25.012 mm, your measurements are inaccurate by 0.012 mm, regardless of how consistent those readings are.

What Precision Really Means

Precision is the degree to which repeated measurements, taken under the same conditions, agree with each other rather than with the true value. Precision measures consistency, not correctness.

If you measure the same 25.000 mm feature five times and get 25.012 mm, 25.013 mm, 25.011 mm, 25.012 mm, and 25.013 mm, your measurements are highly precise — tightly clustered — but still inaccurate.
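The two properties in this example can be put into numbers. The short sketch below takes the five readings quoted above and computes the offset of their mean from the 25.000 mm reference (accuracy) and their sample standard deviation (precision). The variable names are illustrative only.

```python
from statistics import mean, stdev

NOMINAL_MM = 25.000  # reference value from the example above
readings_mm = [25.012, 25.013, 25.011, 25.012, 25.013]

# Accuracy (trueness): how far the average sits from the reference value.
bias_mm = mean(readings_mm) - NOMINAL_MM

# Precision: sample standard deviation of the readings around their own mean.
spread_mm = stdev(readings_mm)

print(f"bias   = {bias_mm:+.4f} mm")   # +0.0122 mm
print(f"spread = {spread_mm:.4f} mm")  # 0.0008 mm
```

The spread is more than ten times smaller than the bias, which is the numerical signature of a precise but inaccurate measurement.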

The ISO 5725 Framework

Under ISO 5725-1:2023, the general term "accuracy" is composed of two sub-components:

- Trueness: How close the mean of repeated measurements is to the true value (systematic error)

- Precision: How tightly clustered those repeated measurements are (random error)

In common technical usage, "accuracy" often refers only to the trueness component. The International Vocabulary of Metrology (VIM 3rd Edition) strictly separates these concepts to avoid ambiguity.

Error Types and Their Impact

Systematic errors affect accuracy/trueness. These are consistent offsets in one direction:

- A caliper with a bent frame that always reads 0.05 mm high

- A CMM probe with undetected physical wear

- A temperature sensor reading 2°C above actual temperature

Random errors affect precision. These produce unpredictable scatter around a mean:

- Varying operator clamping force on a micrometer

- Electrical noise in a digital readout

- Vibration during measurement

Both can coexist. A measurement system can carry a systematic offset (poor accuracy) and high random scatter (poor precision) at the same time.

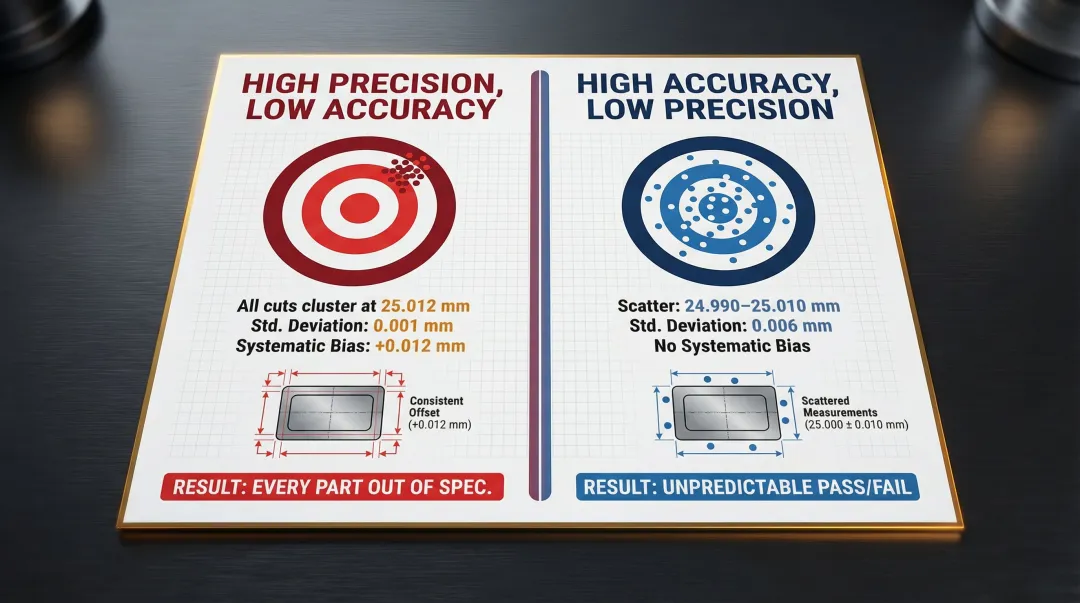

Manufacturing Example: CNC Cutting Accuracy vs. Precision

Consider a CNC machine cutting a part to a nominal dimension of 25.000 mm. Two common failure modes look very different on the shop floor:

| Metric | Scenario 1: High Precision, Low Accuracy | Scenario 2: High Accuracy, Low Precision |
|---|---|---|
| Cut values | All cluster at 25.012 mm | Scatter between 24.990–25.010 mm |
| Mean | 25.012 mm | 25.000 mm |
| Std. deviation | 0.001 mm (excellent) | 0.006 mm (poor) |
| Systematic bias | +0.012 mm | None |
| Result | Every part consistently out of spec | Some parts pass, some fail (unpredictable) |

Scenario 1 points to a calibration offset that needs correcting at the source. Scenario 2 suggests process instability — fixturing, tooling, or environmental factors — that no amount of recalibration alone will resolve.

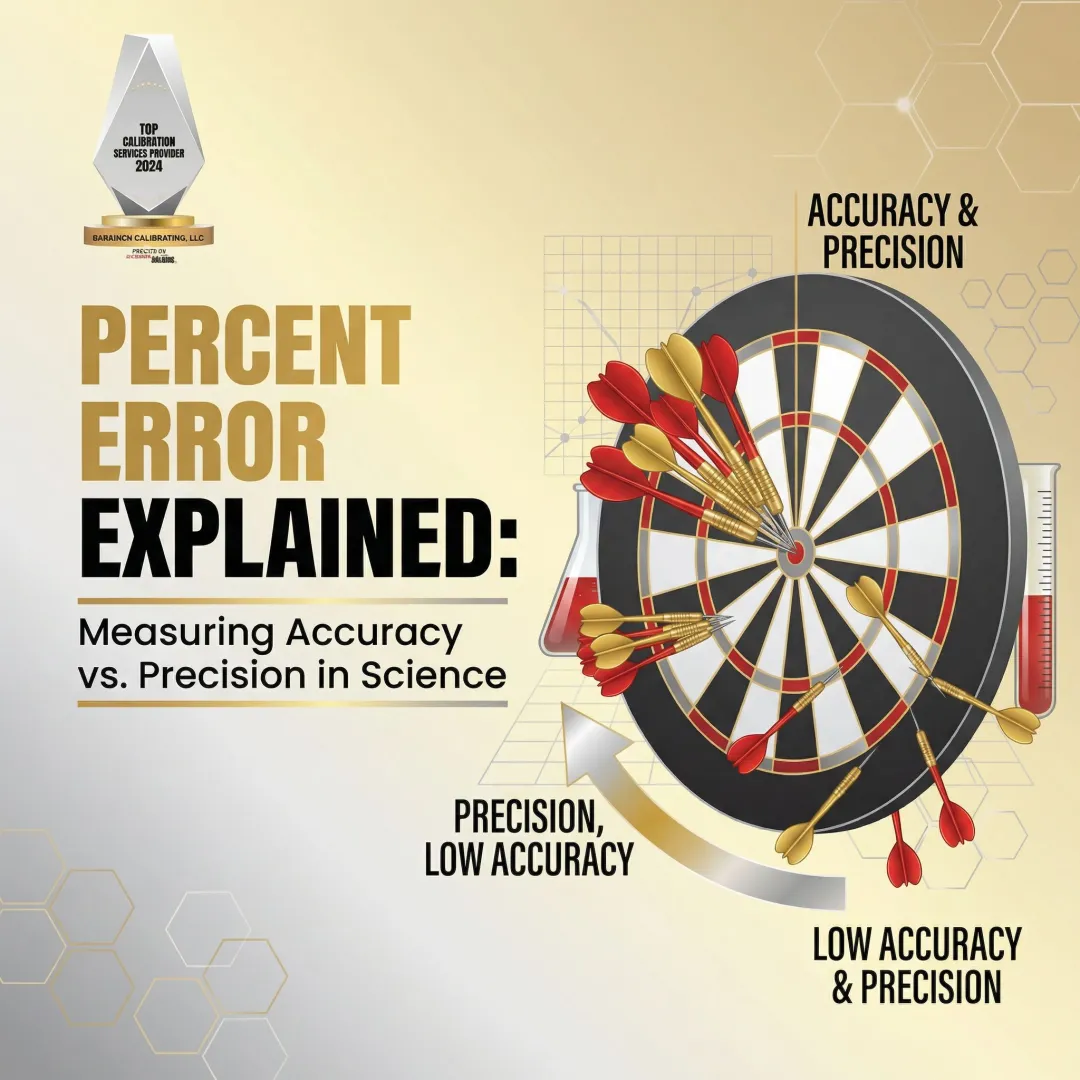

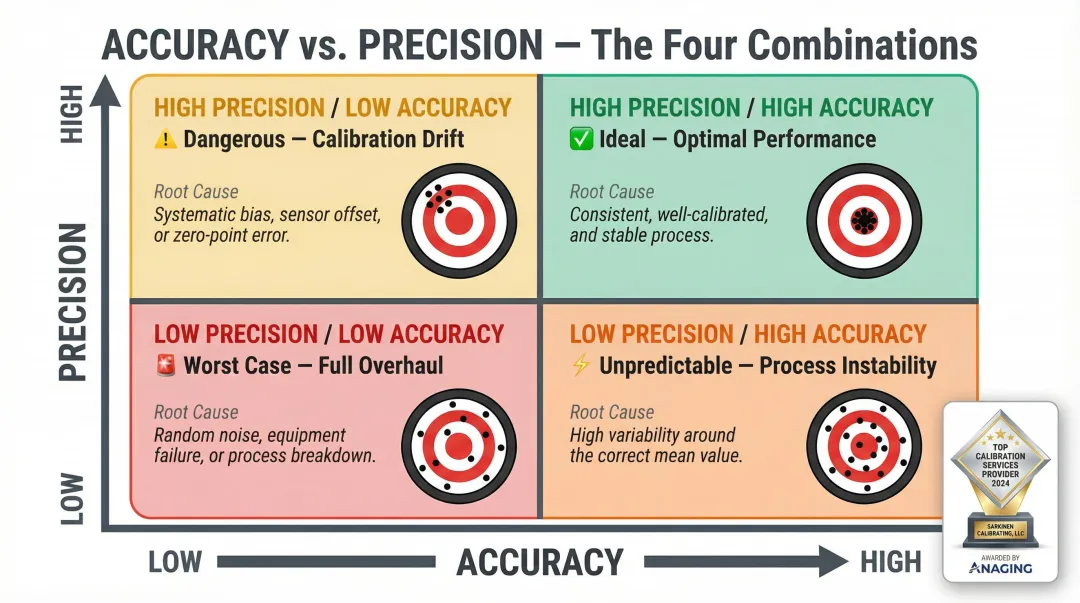

The Four Combinations Every Manufacturer Should Recognize

Each combination points to a different root cause — and a different fix.

The Four Combinations

1. High Accuracy + High Precision (Ideal)

- Measurements are close to the true value and consistent

- Process is both correct and predictable

- This is what every measurement system should achieve

2. High Precision + Low Accuracy (Dangerous)

- Measurements are tightly clustered but systematically offset from the true value

- Indicates calibration drift or systematic bias

- Most dangerous condition: Process appears stable on control charts while consistently producing out-of-spec parts

3. High Accuracy + Low Precision (Unpredictable)

- Measurements average near the true value but vary widely

- Indicates excessive random error from environmental factors, operator technique, or instrument resolution

- Process is correct on average but unreliable

4. Low Accuracy + Low Precision (Worst Case)

- Measurements are neither correct nor consistent

- Multiple error sources present

- Requires a full system overhaul
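The four combinations can be told apart with two numbers: the offset of the mean from nominal and the standard deviation. The sketch below is one possible way to do that. The function name and the two thresholds (`max_bias_mm`, `max_std_mm`) are assumptions chosen for illustration; in practice they would come from your own tolerance and capability requirements.

```python
def classify(mean_mm, std_mm, nominal_mm, max_bias_mm, max_std_mm):
    """Label a measurement set as one of the four accuracy/precision combinations."""
    accurate = abs(mean_mm - nominal_mm) <= max_bias_mm
    precise = std_mm <= max_std_mm
    if accurate and precise:
        return "high accuracy, high precision"
    if precise:
        return "high precision, low accuracy"
    if accurate:
        return "high accuracy, low precision"
    return "low accuracy, low precision"

# Hypothetical thresholds: 0.002 mm on bias and 0.002 mm on standard deviation.
LIMITS = dict(nominal_mm=25.000, max_bias_mm=0.002, max_std_mm=0.002)

# Mean and standard deviation taken from the two CNC scenarios earlier in the article.
print(classify(25.012, 0.001, **LIMITS))  # high precision, low accuracy
print(classify(25.000, 0.006, **LIMITS))  # high accuracy, low precision
```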

Diagnostic Value

Once you've identified which combination is present, the corrective path becomes clear:

Precision problems typically point to:

- Insufficient instrument resolution

- Inconsistent operator technique

- Environmental noise (vibration, temperature fluctuations)

- Mechanical wear causing random variation

Accuracy problems typically point to:

- Calibration drift

- Systematic bias in the measurement method

- Zero-point error

- Uncorrected thermal expansion

The Hidden Danger of High Precision Without Accuracy

In production quality control, high precision without accuracy is particularly dangerous. Standard Shewhart control charts derive their center line and control limits from the process's own data, so they flag changes in variation and shifts away from the historical mean but are blind to a constant offset from the true value (trueness). A highly precise process shows tight control limits and appears stable while output runs consistently out of tolerance. This condition can go undetected without independent checks against traceable reference standards.
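To see why a chart can look healthy while every part is scrap, consider the following minimal sketch of an individuals chart. The ten cut values and the ±0.010 mm specification are hypothetical, chosen to resemble the biased CNC scenario. The 2.66 multiplier is the standard individuals-chart constant (3 divided by d2 = 1.128 for a moving range of two).

```python
from statistics import mean

# Hypothetical cut dimensions (mm), tightly clustered around 25.012.
cuts_mm = [25.012, 25.013, 25.011, 25.012, 25.013,
           25.012, 25.011, 25.013, 25.012, 25.012]

# Assumed specification limits for illustration: 25.000 +/- 0.010 mm.
LSL_MM, USL_MM = 24.990, 25.010

# Individuals-chart limits are computed from the process data itself:
# center line +/- 2.66 * average moving range.
center = mean(cuts_mm)
moving_ranges = [abs(b - a) for a, b in zip(cuts_mm, cuts_mm[1:])]
mr_bar = mean(moving_ranges)
lcl, ucl = center - 2.66 * mr_bar, center + 2.66 * mr_bar

in_control = all(lcl <= x <= ucl for x in cuts_mm)
out_of_spec = sum(not (LSL_MM <= x <= USL_MM) for x in cuts_mm)

print(f"control limits: {lcl:.4f} to {ucl:.4f} mm, in control: {in_control}")
print(f"parts out of spec: {out_of_spec} of {len(cuts_mm)}")
```

With this data every point sits inside the control limits and every part sits outside the assumed specification, because the limits are centered on the biased mean rather than on nominal.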

Key Properties That Govern Measurement System Quality

Five properties define how well a measurement system performs. Whether you're designing a measurement strategy or interpreting gage study results, these are the concepts you need.

Repeatability

Repeatability is the variation in measurements obtained when one operator uses the same instrument on the same characteristic multiple times under identical conditions.

Repeatability is the instrument's contribution to precision under controlled conditions. It answers: Does this tool give me the same reading every time I measure the same thing?

For example: an operator measures a gage block 10 times in 5 minutes. If the readings span ±0.002 mm around their mean (a total range of 0.004 mm), the repeatability standard deviation is on the order of 0.001 mm.
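The link between a ± spread and a standard deviation can be made explicit with the range method used on control charts, where sigma is estimated as the range divided by a tabulated constant d2. The sketch below applies it to the gage-block example. The function name is illustrative, and the method assumes roughly normal scatter in a single subgroup of readings.

```python
# Tabulated d2 control-chart constants, keyed by subgroup size n.
D2 = {2: 1.128, 3: 1.693, 4: 2.059, 5: 2.326,
      6: 2.534, 7: 2.704, 8: 2.847, 9: 2.970, 10: 3.078}

def sigma_from_range(range_mm: float, n: int) -> float:
    """Estimate the standard deviation of n readings from their total range."""
    return range_mm / D2[n]

total_range_mm = 0.004  # readings spanning +/-0.002 mm
sigma_mm = sigma_from_range(total_range_mm, n=10)
print(f"estimated repeatability sigma = {sigma_mm:.4f} mm")  # 0.0013 mm
```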

Reproducibility

Reproducibility is the variation in measurements when different operators, different instruments, or different time periods are involved in measuring the same characteristic.

Reproducibility captures system-level sources of variation beyond a single instrument. It answers: Do different people or different tools get the same result?

Consider three operators each measuring the same part five times. If Operator A averages 25.010 mm, Operator B averages 25.015 mm, and Operator C averages 25.012 mm, reproducibility is the spread between those operator averages.
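As a rough illustration, the spread between the three operator averages can be summarized as a range or as a standard deviation. This sketch uses only the averages quoted above. A formal Gage R&R study would also separate out the repeatability contribution, which is not attempted here.

```python
from statistics import stdev

# Operator averages (mm) from the example above.
operator_means_mm = {"A": 25.010, "B": 25.015, "C": 25.012}
averages = list(operator_means_mm.values())

range_mm = max(averages) - min(averages)  # simplest measure of operator-to-operator spread
spread_mm = stdev(averages)               # sample standard deviation of the averages

print(f"range of operator averages  = {range_mm:.3f} mm")   # 0.005 mm
print(f"std dev of operator averages = {spread_mm:.4f} mm")  # 0.0025 mm
```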

Bias

Bias is the systematic difference between the average of a set of measurements and the true (reference) value — the measurable component of inaccuracy.

Once identified through calibration, bias can be corrected through adjustment or offset correction. A digital caliper that consistently reads 0.05 mm higher than a traceable gage block has a bias of +0.05 mm.

Stability

Stability describes how a measurement system's bias or precision changes over time. An unstable system drifts — results that were accurate at last calibration may not be after months of use, thermal cycling, or mechanical wear.

NIST guidance recommends monitoring measurements on a check standard over time to control bias and long-term variability. For most precision equipment, annual calibration is standard practice; high-use or critical instruments typically warrant quarterly or semi-annual verification.
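Check-standard monitoring can be sketched in a few lines: establish a baseline from early readings of the same artifact, then flag later readings that fall outside baseline ± 3 standard deviations. All readings below are hypothetical, and the 3-sigma rule is one common choice rather than a requirement stated in this article.

```python
from statistics import mean, stdev

# Hypothetical readings (mm) of one check standard taken shortly after calibration.
baseline_mm = [10.0001, 9.9999, 10.0000, 10.0002, 9.9998, 10.0000]

# Hypothetical readings of the same check standard in later months.
later_mm = [10.0001, 10.0003, 10.0009]

center = mean(baseline_mm)
sigma = stdev(baseline_mm)
lower, upper = center - 3 * sigma, center + 3 * sigma

# True means the reading is outside the baseline band and the instrument needs attention.
drift_flags = [not (lower <= x <= upper) for x in later_mm]
print(drift_flags)  # [False, False, True]
```

A flagged reading is a prompt to verify the instrument before its scheduled calibration date, not proof on its own that it is out of tolerance.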

Measurement Uncertainty

Measurement uncertainty is a quantitative statement of the range within which the true value is believed to lie, given the measurement result.

All measurements carry inherent uncertainty influenced by:

- Instrument resolution

- Environmental conditions (temperature, humidity, vibration)

- Operator technique

- Reference standard quality

Uncertainty is expressed as a ± value (e.g., 25.000 mm ± 0.003 mm) and must be considered when evaluating whether a measurement falls within specification. The JCGM 100:2008 Guide to the Expression of Uncertainty in Measurement (GUM) provides the mathematical framework for quantifying and reporting measurement uncertainty.
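A minimal sketch of the GUM approach, under simplifying assumptions: the contributors are uncorrelated, each has a sensitivity coefficient of 1, and a coverage factor of k = 2 is used for roughly 95 % coverage. The component values and the `conformance` helper are hypothetical and only illustrate how uncertainty enters a pass/fail decision.

```python
from math import sqrt

# Hypothetical standard uncertainties (mm), one per contributor listed above.
components_mm = {
    "instrument resolution": 0.0005 / sqrt(3),  # half of a 0.001 mm step, rectangular distribution
    "environment": 0.0006,
    "operator technique": 0.0010,
    "reference standard": 0.0005,
}

# Combined standard uncertainty: root-sum-of-squares of the components.
u_c = sqrt(sum(u ** 2 for u in components_mm.values()))
U = 2 * u_c  # expanded uncertainty with k = 2

def conformance(measured, lower, upper, expanded_u):
    """Classify a result against spec limits, allowing for expanded uncertainty."""
    if lower + expanded_u <= measured <= upper - expanded_u:
        return "pass"
    if measured < lower - expanded_u or measured > upper + expanded_u:
        return "fail"
    return "indeterminate"

print(f"U = {U:.4f} mm")
print(conformance(25.008, 24.990, 25.010, U))  # indeterminate
```

The 25.008 mm reading is nominally inside a ±0.010 mm tolerance, but its uncertainty interval crosses the upper limit, so the result cannot be declared conforming with confidence.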

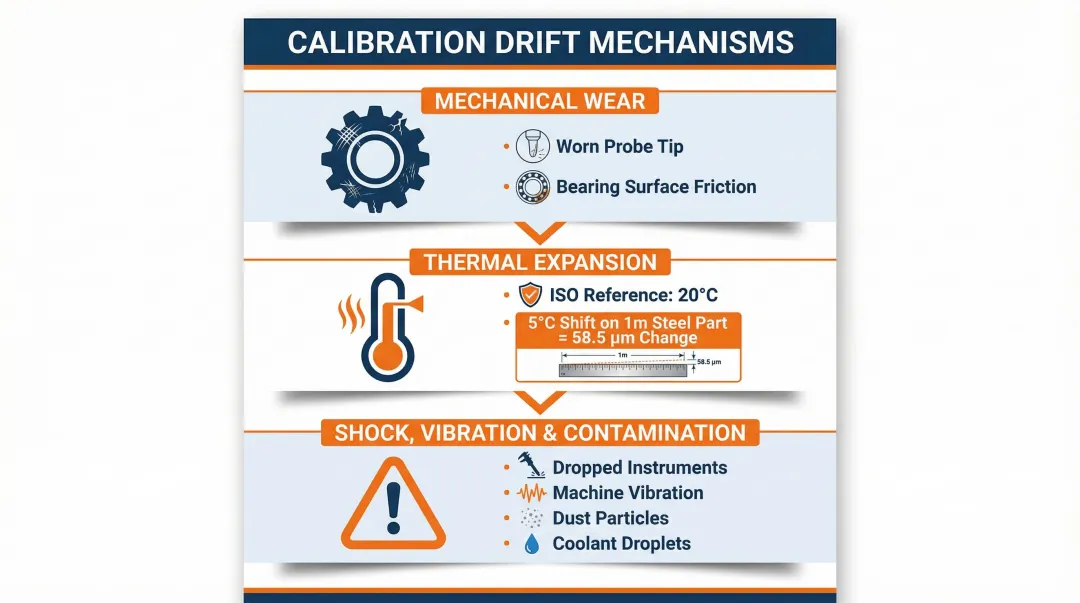

What Causes Measurement Accuracy and Precision to Break Down

Measurement systems are not static. Accuracy and precision degrade over time through predictable mechanisms.

Equipment-Related Degradation

Three equipment-level mechanisms drive calibration drift:

- Mechanical wear — Probe tips wear down, bearing surfaces develop play, and contact points lose their geometry. A tool accurate at its last calibration will drift as these factors accumulate, producing readings that appear valid but are systematically offset from the true value.

- Thermal expansion — ISO 1:2022 sets the standard reference temperature for dimensional measurement at exactly 20°C. When ambient temperature deviates from this, materials expand or contract predictably.

- Shock, vibration, and contamination — Dropped instruments, machine vibration during use, and dust or coolant contamination all shift calibration over time.

The thermal effect deserves specific attention. For plain carbon steel (A36, 1020), the coefficient of thermal expansion (CTE) is approximately 11.7 × 10⁻⁶ /°C. A 5°C shift on a 1-meter part produces 58.5 µm of dimensional change — enough to consume tight manufacturing tolerances entirely.
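The figure above follows from the linear expansion relation ΔL = α · L · ΔT. The sketch below reproduces it with the values quoted in the paragraph. The function name is illustrative.

```python
ALPHA_STEEL_PER_C = 11.7e-6  # CTE of plain carbon steel, per degree C (value quoted above)

def thermal_growth_um(length_mm: float, delta_t_c: float,
                      alpha_per_c: float = ALPHA_STEEL_PER_C) -> float:
    """Return the change in length, in micrometres, for a temperature change."""
    delta_l_mm = alpha_per_c * length_mm * delta_t_c
    return delta_l_mm * 1000.0  # mm to micrometres

growth = thermal_growth_um(length_mm=1000.0, delta_t_c=5.0)
print(f"{growth:.1f} um")  # 58.5 um
```

The same function shows how the effect scales: a 100 mm part under the same 5°C shift grows by about 5.9 µm.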

Operator and Environmental Factors

Equipment condition is only part of the picture. Operator technique and facility environment introduce their own sources of error:

- Technique variation — Inconsistent measurement force, parallax errors, and varying contact points degrade precision even when the instrument is undamaged.

- Facility environment — Temperature swings, humidity changes, and vibration from nearby equipment all contribute to measurement uncertainty.

Research on machine tool thermal errors shows heat-induced errors account for 40% to 70% of total machining error. The same thermal effects act on measurement instruments sitting in the same environment.

The Risk of "Set and Forget" Calibration

Many facilities treat a calibration certificate as permanent proof of accuracy, but instruments drift between calibration events. ISO/IEC 17025:2017 Clause 6.4.7 requires calibration programs to be reviewed and adjusted based on historical performance. Fixed annual intervals either over-calibrate stable instruments or under-calibrate drifting instruments.

Operating tools beyond their calibration interval without verification increases the risk of out-of-tolerance (OOT) events. When an instrument is found OOT, ISO/IEC 17025 Clause 7.10 requires a reverse-traceability impact analysis — examining all previous results to determine whether nonconforming work reached customers.

That regulatory requirement turns extended, unverified calibration intervals into a direct financial and liability risk.

How Measurement Systems Are Specified, Validated, and Calibrated

Formal Documentation and Specifications

Measurement systems are formally documented through specification sheets, engineering drawings, and quality standards that define the rated accuracy and precision of an instrument. Two values matter here:

- Rated values: Manufacturer-stated performance under ideal laboratory conditions

- Tested/verified values: Demonstrated performance under actual operating conditions, typically through Gage R&R studies

The 10:1 Accuracy Ratio Rule

A long-standing rule of thumb in metrology calls for the measuring instrument's uncertainty to be no more than one-tenth of the tolerance being inspected. This keeps instrument uncertainty from dominating pass/fail decisions.

Example: If you're inspecting a part with a ±0.010 mm tolerance, your measurement system should have an uncertainty no greater than 0.001 mm (10:1 ratio).

Modern standards have evolved beyond fixed ratios. ASME B89.1.13-2013 and ISO/IEC 17025 now use Test Uncertainty Ratios (TUR), which explicitly consider documented measurement uncertainty and apply decision rules to control the Probability of False Accept (PFA). Well-calibrated systems often target ratios well beyond 10:1 for tighter process control.
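As an illustration, one widely used definition of TUR divides the full width of the tolerance by twice the expanded (95 %) measurement uncertainty. The sketch below applies that definition to the ±0.010 mm example, working in micrometres to keep the arithmetic exact. The function name is an assumption, and individual standards may define the ratio or the required minimum differently.

```python
def tur_ratio(tol_lower_um: float, tol_upper_um: float, expanded_u_um: float) -> float:
    """Tolerance width divided by twice the expanded (95 %) uncertainty."""
    return (tol_upper_um - tol_lower_um) / (2 * expanded_u_um)

# +/-10 um tolerance measured with 1 um expanded uncertainty.
print(tur_ratio(-10.0, 10.0, 1.0))   # 10.0

# The same tolerance measured with 2.5 um expanded uncertainty.
print(tur_ratio(-10.0, 10.0, 2.5))   # 4.0
```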

NIST Traceability

A measurement qualifies as traceable when it links through an unbroken chain of comparisons to national or international measurement standards maintained by bodies such as the National Institute of Standards and Technology (NIST).

A "NIST" logo on a certificate does not automatically guarantee metrological traceability. Per VIM 2.41, traceability requires:

- A documented, unbroken chain of calibrations

- Each calibration contributing to the measurement uncertainty

- Explicit statement of the reference standards used

- Documented measurement uncertainty values

Without these elements, "accuracy" claims lack a verifiable basis.

The Calibration Process

Establishing traceability requires a rigorous calibration process — one that serves both as verification and correction:

- Verification: Compare the instrument's output against a traceable reference standard

- Documentation: Record any deviation (bias) found

- Correction: Either adjust the instrument or record the correction factor for future use
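The three steps above can be sketched as a pair of small functions. The readings, the 0.020 mm acceptance limit, and the record layout are hypothetical. A real calibration record would also carry the reference standard's identity and the measurement uncertainty.

```python
from statistics import mean

def verify(readings, reference_value, max_permissible_error):
    """Steps 1 and 2: compare against a reference and record the deviation found."""
    observed = mean(readings)
    bias = observed - reference_value
    return {
        "reference_value": reference_value,
        "mean_reading": observed,
        "bias": bias,
        "in_tolerance": abs(bias) <= max_permissible_error,
    }

def correct(raw_reading, record):
    """Step 3 (offset option): subtract the recorded bias from a later reading."""
    return raw_reading - record["bias"]

# Hypothetical caliper checked against a 25.000 mm gage block.
record = verify([25.050, 25.051, 25.049], reference_value=25.000,
                max_permissible_error=0.020)
print(f"bias = {record['bias']:+.3f} mm, in tolerance: {record['in_tolerance']}")
print(f"corrected reading = {correct(12.345, record):.3f} mm")
```

Applying an offset in software is only one of the two correction options; physically adjusting the instrument removes the need to carry the correction factor forward.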

For manufacturing operations in Portland, OR and SW Washington, Sarkinen Calibrating delivers NIST-traceable calibration at 1.0 parts per million accuracy, well past the 10:1 minimum ratio. That level of accuracy gives facilities a clear, documented record that their measurement systems are performing as required, which holds up under both internal audits and customer scrutiny.

Conclusion

Accuracy and precision are distinct, measurable properties that together define whether a measurement system can be trusted. Accuracy without precision produces inconsistent results; precision without accuracy produces consistent errors. Both must be actively monitored and maintained.

Measurement systems are not static. Drift, wear, and environmental exposure degrade both accuracy and precision over time, making regular validation against traceable standards an operational necessity rather than an optional quality formality. Facilities that act on this through scheduled calibration and verification control their quality. Those that don't typically discover problems only after they've reached the customer.

The concepts covered here give you the vocabulary to ask better questions about your equipment — and the context to act on the answers before a measurement error becomes a production problem.

Frequently Asked Questions

What is the meaning of precision of measurement?

Precision is the degree to which repeated measurements under the same conditions agree with each other—not necessarily with the true value. It quantifies consistency and is measured through repeatability (same operator, instrument, short time) and reproducibility (different operators, instruments, or time periods).

How do you measure precision?

Precision is measured by taking multiple readings of the same quantity under controlled conditions and calculating the spread (standard deviation or range) of those results. A smaller spread indicates higher precision. This is typically done through formal Gage R&R studies.

What is the difference between precision and measurement?

Measurement is the act or result of measuring a physical property. Precision describes how consistent or repeatable those results are—one of several criteria, alongside accuracy, stability, and uncertainty, used to evaluate measurement quality.

Can a measurement be precise but not accurate?

Yes—a system can produce tightly clustered, consistent results that are all offset from the true value due to systematic bias. This is one of the most common and consequential conditions in manufacturing measurement because the process appears stable while consistently producing out-of-spec parts.

What causes measurement inaccuracy in manufacturing equipment?

Common causes include calibration drift from mechanical wear or thermal cycling, zero-point offset, systematic bias in the measurement method, and instruments used beyond their calibration interval. Environmental factors, particularly temperature deviation from the 20°C ISO standard reference temperature, also contribute significantly.

How does calibration improve measurement accuracy and precision?

Calibration compares an instrument's readings against a traceable reference standard, identifies bias or drift, and either corrects the instrument or documents the correction factor. This restores confidence in accuracy and establishes a baseline for monitoring precision over time.