Introduction

A CNC machine in your shop cuts parts to the same dimensions every single time—except they're all 0.003 inches short of the target. Is this an accuracy problem or a precision problem? The answer determines whether you need calibration or process improvement, and getting it wrong can cost thousands in scrap and downtime.

Many manufacturers struggle to distinguish accuracy from precision, chasing the wrong fix and paying for it. According to industry research, ineffective calibration programs cost manufacturers an average of $1.73 million annually, with unexpected production downtime reaching $22,000 per minute in sectors like automotive.

Knowing which problem you're actually dealing with is the first step to solving it — and protecting your production floor from costs that compound fast.

TLDR

- Accuracy measures how close a measurement is to the true value; precision measures how consistently you reproduce that measurement

- A machine can be precise without being accurate (consistent but wrong), or accurate without being precise (correct on average but unpredictable)

- Poor accuracy points to a calibration or systematic error; poor precision suggests process instability or equipment wear

- NIST-traceable calibration catches both types of error before they turn into scrapped parts or failed warranty claims

Accuracy vs. Precision: What's the Difference?

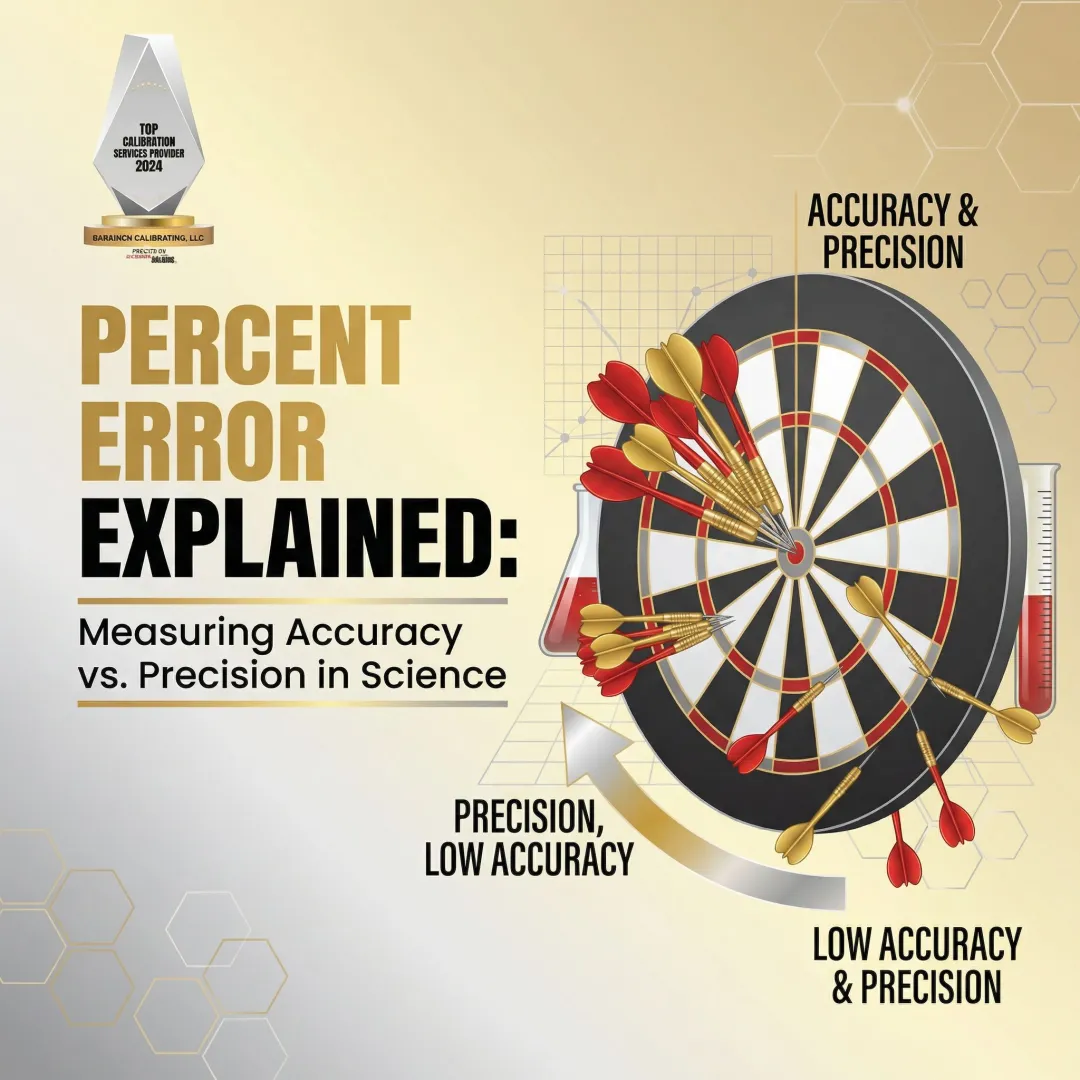

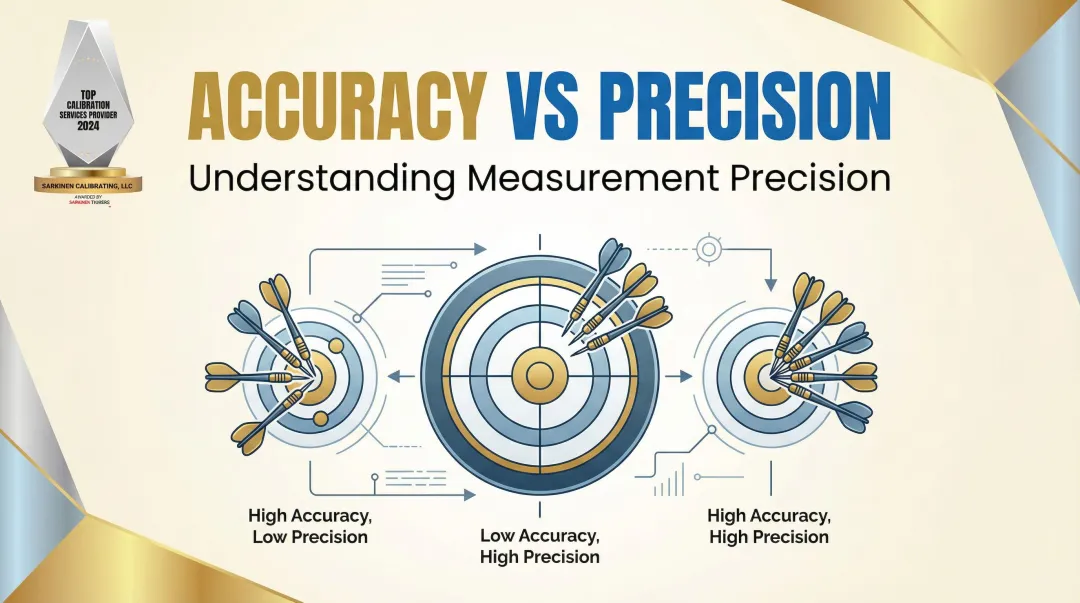

Picture a dartboard: accuracy describes where your darts land relative to the bullseye (the true value), while precision describes how tightly your darts cluster together, regardless of where they land.

Defining Accuracy

Accuracy is the closeness of a measured value to the true or accepted reference value. In manufacturing, the "true value" may be a design specification, a tolerance standard, or a NIST-traceable reference measurement. Accuracy is affected by systematic errors: consistent, directional biases that push all measurements off in the same direction.

A milling machine that always cuts 0.003 inches short of the target dimension is inaccurate, even if every cut is identical. The error is real, repeatable, and fixable — but only if it's identified as an accuracy issue.

Defining Precision

Precision is the consistency of measurements — how reliably an instrument or process delivers the same result under the same conditions. Critically, precision is independent of the true value. A highly precise instrument can still be inaccurate if it's systematically off.

Metrology standards break precision into two distinct components:

- Repeatability: Same instrument, same operator, same conditions in a short time period

- Reproducibility: Same measurement process across different operators, shifts, or environments

Standard deviation quantifies precision — a smaller standard deviation means tighter, more consistent measurements.

Example: A torque wrench that reads 45 ft-lbs every single time is precise. If the true torque being applied is actually 47 ft-lbs, the wrench is precise but not accurate.
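To make the two ideas concrete, here is a minimal Python sketch that separates bias (accuracy) from spread (precision) for a set of repeated readings. The readings and the `summarize` helper are hypothetical, chosen only to mirror the torque wrench example above.

```python
from statistics import mean, stdev

def summarize(readings, reference):
    """Return (bias, spread) for repeated readings of a known reference."""
    avg = mean(readings)
    bias = avg - reference      # accuracy: signed offset from the true value
    spread = stdev(readings)    # precision: sample standard deviation
    return bias, spread

# Hypothetical torque-wrench readings (ft-lbs) against a 47 ft-lb reference
readings = [45.0, 45.1, 44.9, 45.0, 45.0]
bias, spread = summarize(readings, reference=47.0)
print(f"bias = {bias:+.2f} ft-lbs, std dev = {spread:.2f} ft-lbs")
```

A large bias with a small standard deviation is the "precise but not accurate" pattern: the wrench repeats well, but every reading sits about 2 ft-lbs low.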

The Four Possible Combinations of Accuracy and Precision

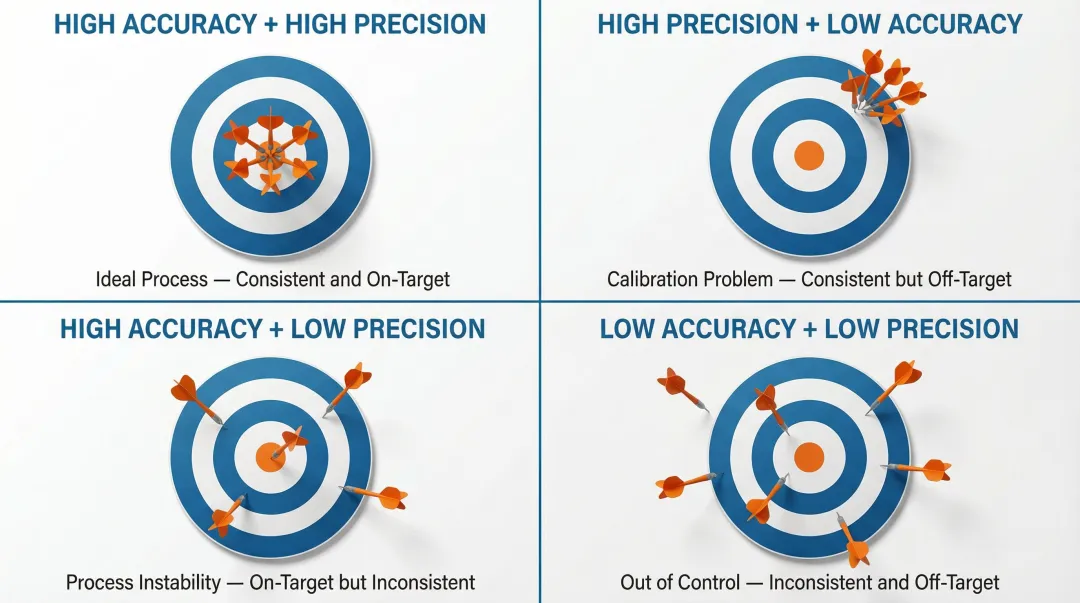

Using the dartboard framework, manufacturers can encounter four outcome scenarios:

1. High accuracy, high precision: Measurements cluster tightly around the true value, the ideal outcome. Your process is both correct and consistent.

2. High precision, low accuracy: Consistent measurements, but systematically off. This is a calibration problem. It is the most dangerous scenario in manufacturing because it creates false confidence: equipment producing repeatable but out-of-spec parts can pass internal checks while delivering defective products to customers.

3. High accuracy, low precision: Measurements average out to the correct value but are scattered. This is a process stability problem. Your machine hits the target on average, but unpredictable variation makes quality control impossible.

4. Low accuracy, low precision: Measurements are scattered and wrong, the most costly and difficult scenario to diagnose. Both calibration and process improvement are needed.

Knowing which combination you're dealing with points directly to the right fix. Calibration corrects systematic error — the consistent offset that shifts all your measurements in the same direction. Process improvement or equipment maintenance targets random variation, the unpredictable scatter that calibration alone can't solve. Misidentifying one for the other wastes time and leaves the real problem untouched.
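The four combinations can be sketched as a simple decision rule. This is an illustrative Python example, not a formal gauge study: the `diagnose` function, the limits, and the sample readings are all assumptions, and in practice the limits would come from your own tolerances.

```python
from statistics import mean, stdev

def diagnose(readings, target, bias_limit, spread_limit):
    """Map repeated measurements onto the four accuracy/precision cases."""
    bias = abs(mean(readings) - target)   # size of the systematic offset
    spread = stdev(readings)              # size of the random scatter
    accurate = bias <= bias_limit
    precise = spread <= spread_limit
    if accurate and precise:
        return "in control"
    if precise:
        return "calibrate: systematic offset"
    if accurate:
        return "stabilize process: random scatter"
    return "calibrate and stabilize process"

# Hypothetical cuts (inches) that are tight but about 0.003 short of a 2.000 target
tight_but_short = [1.9970, 1.9971, 1.9969, 1.9970, 1.9970]
print(diagnose(tight_but_short, target=2.000, bias_limit=0.001, spread_limit=0.0005))

# Hypothetical cuts that average on target but scatter widely
on_target_but_scattered = [1.995, 2.005, 1.998, 2.002, 2.000]
print(diagnose(on_target_but_scattered, target=2.000, bias_limit=0.001, spread_limit=0.0005))
```

The first data set points to calibration, the second to process stability, which is the distinction the paragraph above draws.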

Common Errors That Affect Accuracy and Precision

Systematic errors affect accuracy. These are consistent, predictable biases introduced by a faulty instrument, improper setup, environmental factors (temperature, vibration), or wear and drift over time. Because systematic errors shift all measurements in the same direction, they're often invisible during normal operation—the machine appears to be working fine, but the output is consistently wrong.

Temperature variation is a prime example: standard steel expands at approximately 11.5 ppm/°C, meaning even a minor thermal shift can consume an entire part tolerance.
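As a rough worked example of that arithmetic, the Python sketch below applies linear expansion (length × coefficient × temperature change) to a hypothetical 10-inch steel part that warms by 5 °C. The part size, temperature shift, and function name are assumptions for illustration; only the 11.5 ppm/°C figure comes from the text.

```python
STEEL_CTE_PER_C = 11.5e-6  # approximate expansion per degree C (11.5 ppm/°C)

def thermal_growth(length, delta_temp_c, cte=STEEL_CTE_PER_C):
    """Linear growth of a part, in the same units as `length`."""
    return length * cte * delta_temp_c

# Hypothetical case: 10-inch steel part, shop warms by 5 °C
growth = thermal_growth(length=10.0, delta_temp_c=5.0)
print(f"{growth:.6f} in")  # prints 0.000575 in
```

A growth of roughly 0.0006 inches would already exceed a tolerance of ±0.0005 inches, with no change to the machine itself.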

Random errors affect precision. These arise from unpredictable variation: slight differences in material, operator technique, environmental fluctuation, or measurement resolution. Random errors scatter measurements, reducing both repeatability and reproducibility.

Unlike systematic errors, they cannot be fully eliminated, but they can be minimized by:

- Improving measurement resolution

- Taking multiple readings

- Averaging results across readings
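Why averaging helps can be sketched with the standard error of the mean: if the random errors are independent, the scatter of an average of n readings shrinks by a factor of the square root of n. The function name and the 0.0004-inch starting scatter below are hypothetical.

```python
import math

def standard_error(std_dev, n_readings):
    """Expected scatter of the average of n independent readings."""
    return std_dev / math.sqrt(n_readings)

# Hypothetical single-reading scatter of 0.0004 in
for n in (1, 4, 16):
    print(n, f"{standard_error(0.0004, n):.6f}")
```

Quadrupling the number of readings halves the random scatter of the average. Averaging does nothing for a systematic offset, which stays the same no matter how many readings are taken.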

Both error types can coexist: a machine can have a systematic offset (accuracy problem) and also exhibit significant measurement scatter (precision problem). Identifying which type dominates determines whether you need recalibration, a setup correction, or a closer look at your process controls.

Why Both Accuracy and Precision Matter in Manufacturing

When accuracy or precision breaks down, production pays for it. The costs show up in a few different ways:

- Scrap and rework from systematic errors — A dimensional shift of even a few thousandths of an inch, left uncorrected in a high-volume CNC run, can invalidate an entire batch before anyone catches it

- Lost process control from unpredictable measurements — When readings vary inconsistently, operators can't set reliable offsets, tolerances stack unpredictably in assemblies, and quality shifts from prevention to inspection

- Unexpected downtime — Unpredictable equipment is one of the most disruptive and avoidable causes of production stoppages

- Customer and audit exposure — Manufacturers need to prove their machines deliver as promised, consistently. Documented, traceable measurements support quality certifications and give customers concrete proof of compliance

Beyond production losses, there's a timeline problem. A machine that's accurate and precise on day one will drift over time due to wear, thermal cycling, and mechanical stress. Periodic calibration isn't a housekeeping task — it's how shops maintain the output quality they were producing when the machine was new.

That's the perspective Sarkinen Calibrating's founder Larry brought when he built the service: starting as a machine operator, he understood that calibration is ultimately about production efficiency, not equipment maintenance for its own sake. New machines, too, should be verified against their promised specifications while still under warranty — calibration data is the documented proof needed to hold manufacturers accountable if a machine doesn't meet spec from day one.

How Calibration Maintains Accuracy and Precision in Your Equipment

Professional calibration compares an instrument's measurements against a reference standard of known, higher accuracy. The 10:1 ratio rule states that the reference standard used in calibration should be at least 10 times more accurate than the device being calibrated. Sarkinen Calibrating provides measurements well beyond this 10:1 ratio, with all measurements traceable to NIST and/or International Standards.
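The ratio check itself is simple arithmetic. The sketch below is a hypothetical Python helper, with made-up tolerance and uncertainty figures, that compares a device's tolerance to the uncertainty of the reference standard used to calibrate it.

```python
def accuracy_ratio(device_tolerance, reference_uncertainty):
    """How many times tighter the reference is than the device under test."""
    return device_tolerance / reference_uncertainty

def meets_ratio(device_tolerance, reference_uncertainty, required=10.0):
    """True when the reference satisfies the required ratio (10:1 by default)."""
    return accuracy_ratio(device_tolerance, reference_uncertainty) >= required

# Hypothetical figures: device tolerance 0.0005 in, reference uncertainty 0.00002 in
ratio = accuracy_ratio(0.0005, 0.00002)
print(f"{ratio:.0f}:1", meets_ratio(0.0005, 0.00002))
```

In this made-up case the ratio is about 25:1, comfortably above the 10:1 guideline. A reference with 0.0001 inches of uncertainty would give only 5:1 and would not qualify.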

NIST traceability means every measurement in a calibration chain links back to a national or international standard, providing documented proof that accuracy has been verified. This is the foundation of any quality management system that depends on measurement integrity.

During calibration, the technician:

- Identifies the magnitude and direction of any systematic error (accuracy deviation)

- Adjusts or documents the correction

- Verifies that the instrument's repeatability (precision) is within acceptable limits

- Creates the "before and after" record that proves a machine meets spec

That process translates directly into measurable results for your operation.

Business outcomes of regular calibration:

- Predictable operation with minimal unexpected downtime

- Early identification of drift or damage before it becomes a production problem

- Ability to prove machine performance to customers

- Proactive quality management rather than reactive repair

Sarkinen Calibrating serves manufacturing facilities across Portland, OR, and SW Washington, with local response times that keep equipment running and production on schedule.

Frequently Asked Questions

What is the difference between accuracy and precision in calibration?

Accuracy is how close a measurement is to a known reference value; precision is how consistently an instrument repeats that measurement. Calibration corrects accuracy by comparing against a traceable standard, while precision is evaluated through repeatability testing across multiple readings.

What are the 4 principles of accurate measurement?

Four core principles support accurate measurement:

- Use a calibrated reference standard

- Minimize systematic and random errors

- Ensure traceability to a recognized standard (such as NIST)

- Verify repeatability across multiple measurements under consistent conditions

What are the four types of calibration?

The four main types are electrical, mechanical (including dimensional and force), temperature, and pressure calibration. CNC and precision manufacturing equipment typically falls under mechanical/dimensional calibration.

What level of measurement is the most precise?

The highest levels of precision are achieved using instruments calibrated against primary reference standards with traceability to NIST or international equivalents. In dimensional measurement for manufacturing, precision can reach the sub-micron level; Sarkinen Calibrating offers accuracy to 1.0 parts per million—or one millionth of an inch per inch.

Is cm or inches more precise?

The unit of measurement (centimeters or inches) does not inherently determine precision—precision depends on the instrument used and its resolution. In manufacturing, both metric and imperial systems can achieve the same level of precision when using appropriately calibrated, high-resolution instruments.