Introduction

Even a tiny measurement drift in precision equipment can cascade into defective products, costly rework, and unexpected downtime. For manufacturing and machine shop operators, machine validation isn't optional—it's essential. A CNC machine that's slightly out of calibration doesn't just produce one bad part; it systematically skews every part in the interval, potentially resulting in scrap, customer returns, or warranty claims far exceeding the cost of regular calibration.

This guide covers what precision testing machines are, how electromechanical validation works, what standards govern accuracy, and what to look for in a calibration service.

Whether you're verifying a new machine's specs under warranty or maintaining aging equipment, understanding these fundamentals helps you avoid the compounding failures that calibration drift causes.

TLDR:

- Precision testing machines verify material properties and equipment accuracy through traceable measurement systems

- Electromechanical systems offer superior low-speed control and repeatability for loads under 300 kN

- NIST traceability provides auditable measurement chains required for compliance and customer proof

- Uncalibrated machines create compounding quality failures that far exceed calibration costs

- Local calibration providers deliver faster response times and shorter downtime windows

What Are Precision Testing Machines?

Precision testing machines are instruments and systems used to measure, verify, or validate the mechanical, electrical, or dimensional properties of materials, parts, or equipment. What distinguishes them from general-purpose tools is their traceability to recognized standards and their measurement resolution—typically capable of detecting deviations at the micrometer or nanometer level.

Major Categories in Manufacturing Environments

Each category serves a distinct function in quality control and machine verification:

- Electromechanical universal testing machines (UTMs) apply controlled force to determine tensile strength, compression, elongation, and modulus, using servo motors and ball screws with closed-loop encoder feedback

- Coordinate Measuring Machines (CMMs) verify machined part dimensions against CAD models via tactile and optical probing; high-end systems reach accuracy below one micron

- Dimensional gauging systems (laser interferometers, telescoping ballbars) measure CNC machine tool accuracy and diagnose positioning errors, backlash, and geometric drift before they cause defects

- Torque and force testers confirm that assembly tools register accurate torque, preventing fastener failures from over- or under-tightening

- Rotary axis calibration equipment measures angular positioning accuracy and alignment on multi-axis machining centers, surfacing mechanical wear, setup errors, and encoder issues

Precision Testing vs. Precision Measurement

There's an important distinction between these two categories that shapes every equipment decision. A precision testing machine evaluates a material or product's properties—a UTM determining weld integrity, for example. A precision measurement instrument verifies that a machine or process stays within spec, like a laser interferometer validating CNC axis travel.

The practical question: are you testing what you made, or verifying the equipment that made it? Getting that answer right determines which tool belongs on your floor.

Industries That Depend on Precision Testing

Precision testing spans nearly every sector that touches manufactured goods:

- Aerospace — CMMs verified to ISO 10360 inspect turbine blades and fuselage structures; a measurement error in a structural component can initiate fatigue cracks

- Automotive — servohydraulic systems simulate crash conditions; torque verification systems on assembly lines prevent fasteners from backing off in service

- CNC machining and fabrication — ballbar testing and laser interferometry confirm that machine tools hold tight tolerances; many dimensional defects trace back to undetected positioning errors, not bad tooling

- Construction materials — compression testers verify concrete cylinder strength per ASTM C39, satisfying building code and safety requirements

- Electronics — electromechanical UTMs test delicate components cleanly, without the contamination risk of hydraulic systems

- Research institutions — materials science and metrology labs require the highest-resolution instruments available

Precision Equipment in Action

Here's how these tools play out on a typical machine shop floor:

- A CMM checks a machined aerospace bracket after 5-axis milling, confirming complex geometric features match CAD specs within ±0.0005 inches before the part ships to final assembly.

- A UTM pulls a weld joint sample to failure, verifying the joint meets minimum strength requirements before production welding begins.

- A laser interferometer validates CNC axis travel on a newly installed machining center—measuring positioning accuracy across the full 40-inch X-axis to confirm the machine hits its ±0.0001-inch warranty spec.

- A ballbar test on a 20-year-old CNC router surfaces servo mismatch and backlash, letting the shop schedule repairs during planned downtime instead of losing a critical production run to an unexpected failure.
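The interferometer and ballbar checks above come down to comparing where an axis was commanded to go with where it actually went, in both directions of travel. As a rough illustration only, the sketch below takes deviations (measured minus commanded position, in micrometers) at a few targets and estimates the reversal error, which is how backlash shows up in the data. The function names and sample readings are hypothetical, and real acceptance tests follow the procedure in the applicable machine tool standard rather than this simplified calculation.

```python
from statistics import mean


def reversal_values(forward_um, reverse_um):
    """Per-target reversal: mean forward deviation minus mean reverse deviation."""
    if len(forward_um) != len(reverse_um):
        raise ValueError("need the same targets in both directions")
    return [mean(f) - mean(r) for f, r in zip(forward_um, reverse_um)]


def bidirectional_error_band(forward_um, reverse_um):
    """Spread between the largest and smallest mean deviation over all targets."""
    means = [mean(run) for run in forward_um + reverse_um]
    return max(means) - min(means)


# Hypothetical deviations in micrometers: one inner list per target position,
# one value per repeated approach in that direction.
forward = [[1.0, 1.2], [2.0, 2.2], [3.1, 2.9]]
reverse = [[-1.0, -0.8], [0.0, 0.2], [1.0, 1.0]]

reversals = reversal_values(forward, reverse)
print("reversal per target (um):", [round(r, 3) for r in reversals])
print("error band (um):", round(bidirectional_error_band(forward, reverse), 3))
```

A reversal value that is roughly constant along the axis, as in this made-up data, points toward backlash rather than a scale or pitch error, which is the kind of diagnosis that lets repairs be planned instead of forced.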

How Electromechanical Validation Works

Core Operating Principle

Electromechanical testing systems use a servo motor to drive a lead screw or ball screw mechanism, applying precise, controlled force or displacement to a test specimen. Load cells and encoders measure the response in real time, creating force-displacement curves that reveal material properties.

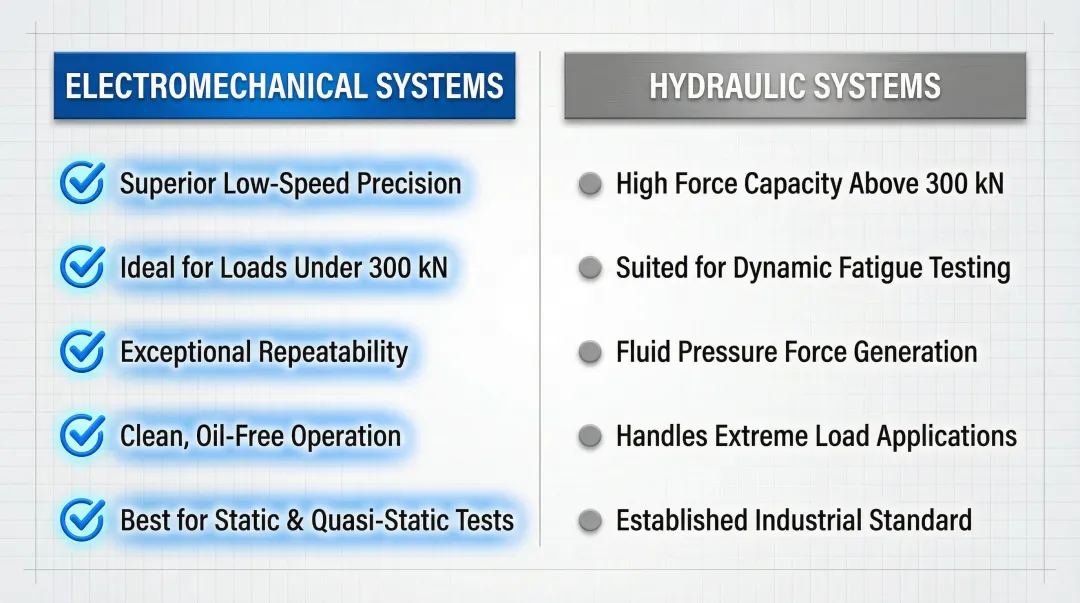

Unlike hydraulic systems that use fluid pressure to generate force, electromechanical systems offer superior precision and repeatability for static and quasi-static tests. Hydraulic systems excel at very high forces (exceeding 300 kN) and dynamic fatigue testing, but electromechanical systems deliver pinpoint accuracy at low loads and exceptional low-speed positioning control. That precision is what makes them the right choice for manufacturing validation work.

Key Hardware Components

Frame/Load Frame: The structural backbone that resists applied forces with minimal deflection, ensuring measurements reflect specimen behavior rather than machine compliance.

Servo Motor and Drive System: Converts electrical signals into precise mechanical motion, controlling crosshead speed and position with closed-loop feedback. Modern systems achieve control loop rates up to 5000 Hz for smooth, jitter-free motion.

Load Cell: Measures applied force using strain gauges in a Wheatstone bridge configuration. High-quality load cells use temperature compensation resistors and materials with low thermal expansion to minimize drift. Load cell stability is critical. If instability between calibrations is 0.2%, claiming 0.1% system accuracy simply isn't valid.

Extensometer or Displacement Sensor: Measures specimen elongation or compression. Optical encoders provide position feedback, though interpolation between physical scale marks can introduce cyclic sub-divisional errors that must be characterized and compensated.

Grips/Fixtures: Secure the specimen without inducing stress concentrations or slippage that would invalidate test results.

Data Acquisition System: Samples sensor signals at high rates (1000–5000 Hz), fast enough that dynamic events are captured with little risk of aliasing.
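The point about load cell stability can be made concrete with a small error budget. The sketch below assumes the individual contributors are independent and combines them by root-sum-square, which is a common simplification and not the only valid method. The function names and the 0.05% reference-standard figure are hypothetical; only the 0.2% instability and 0.1% claim come from the example above.

```python
import math


def combined_error_pct(contributions_pct):
    """Root-sum-square of independent error contributions, in percent."""
    return math.sqrt(sum(c ** 2 for c in contributions_pct))


def claim_is_supportable(claimed_pct, contributions_pct):
    """A claimed accuracy cannot be tighter than the combined contributions."""
    return combined_error_pct(contributions_pct) <= claimed_pct


# 0.2% load cell instability (from the text) plus an assumed 0.05% reference standard.
budget = [0.2, 0.05]
print(round(combined_error_pct(budget), 4))
print(claim_is_supportable(0.1, budget))   # the 0.1% claim from the text
print(claim_is_supportable(0.25, budget))  # a looser, hypothetical claim
```

Because the largest contributor dominates a root-sum-square total, no amount of care elsewhere in the system can rescue a claim that is tighter than the load cell's own stability.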

Software Translation Layer

Raw sensor data becomes validated results through purpose-built software that generates real-time force-displacement curves, calculates material properties (tensile strength, yield point, elongation, and more), and controls test parameters to ensure repeatability across runs. Test methods are programmed to follow standards like ASTM E8 for tensile testing, with automated compliance checks ensuring each test meets protocol requirements.
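To make the translation step less abstract, here is a minimal sketch of how a force-extension record might be reduced to two headline properties. It assumes engineering stress (force over original cross-section) and takes elongation from the last recorded extension. The function name, class name, and sample data are hypothetical, and commercial test software applies the full procedure of the governing test method rather than this shortcut.

```python
from dataclasses import dataclass


@dataclass
class TensileResult:
    ultimate_strength_mpa: float
    elongation_at_break_pct: float


def summarize_tensile_test(forces_n, extensions_mm, area_mm2, gauge_length_mm):
    """Reduce a force-extension record to ultimate strength and elongation."""
    if not forces_n or len(forces_n) != len(extensions_mm):
        raise ValueError("force and extension records must be non-empty and equal length")
    # Engineering stress uses the ORIGINAL cross-section; N/mm^2 equals MPa.
    stresses = [f / area_mm2 for f in forces_n]
    # Engineering strain at the final sample, expressed in percent.
    elongation_pct = extensions_mm[-1] / gauge_length_mm * 100.0
    return TensileResult(max(stresses), elongation_pct)


# Hypothetical record: force in newtons, extension in millimeters.
forces = [0.0, 5000.0, 10000.0, 12000.0, 11000.0]
extensions = [0.0, 0.05, 0.10, 2.0, 6.0]
result = summarize_tensile_test(forces, extensions, area_mm2=25.0, gauge_length_mm=50.0)
print(result)
```

With these made-up numbers the peak force of 12,000 N over 25 mm² gives 480 MPa, and 6 mm of extension on a 50 mm gauge length gives 12% elongation.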

Types of Tests for Manufacturing Validation

Electromechanical systems support a range of test types, each targeting specific failure modes or material behaviors:

- Tensile Testing: Pulls a specimen to failure, revealing ultimate tensile strength, yield strength, and elongation. Validates that incoming materials meet specification before they enter production.

- Compression Testing: Applies crushing force to measure how materials respond under compressive loads. Common for concrete cylinders, foam cushioning, and structural components.

- Flexural/Bend Testing: Loads a supported beam to measure bending resistance. Particularly relevant for plastics, composites, and engineered wood products.

- Fatigue Testing: Applies cyclic loading to determine how many cycles a part survives before failure. A requirement in automotive, aerospace, and medical device validation where components face repeated stress.

Why Machine Calibration Is a Prerequisite

If the load cell reads 2% high or the encoder drifts, every test result produced by that machine is suspect. Calibration of the electromechanical testing machine itself is a prerequisite for valid results, connecting machine validation to the broader quality assurance chain. Without regular calibration, the data reflects instrument error as much as material behavior.

Key Measurements and Standards in Precision Testing

NIST Traceability Explained

NIST traceability means calibration measurements can be traced through an unbroken chain of comparisons, each with a stated uncertainty, back to national or international standards. That documented chain is what makes your measurements defensible during customer audits, regulatory inspections, and warranty disputes, which makes traceability far more than a compliance checkbox.

For Portland OR and SW Washington manufacturers, Sarkinen Calibrating provides measurements traceable to NIST and/or International Standards, giving local facilities audit-ready calibration documentation without shipping equipment out of the region or waiting weeks for off-site lab results.

The 10:1 Calibration Ratio Rule (and Why It's Evolving)

Historically, the 10:1 Test Accuracy Ratio (TAR) dictated that calibration standards should be 10 times more accurate than the device being calibrated. As modern instrumentation has become increasingly precise, finding standards at that margin is often no longer technically feasible.

The industry has shifted to Test Uncertainty Ratio (TUR), which divides the tolerance by the 95% expanded measurement uncertainty of the calibration process. Higher TUR values deliver greater confidence in results — Sarkinen Calibrating achieves accuracy to 1.0 parts per million (one millionth of an inch per inch), well beyond the traditional ratio. When measurement uncertainty is large, guard-banding (per ILAC G8:2019) narrows the acceptance zone to keep false-accept risks below acceptable thresholds.
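The TUR and guard-band ideas can be sketched in a few lines. This example assumes a symmetric tolerance (plus or minus a half-width around nominal) and a guard band equal to the expanded uncertainty, which is one common choice. Other decision rules exist, and the function names and numbers are hypothetical.

```python
def uncertainty_ratio(tolerance_half_width, expanded_uncertainty):
    """TUR for a symmetric tolerance: half-width over 95% expanded uncertainty."""
    return tolerance_half_width / expanded_uncertainty


def acceptance_limits(nominal, tolerance_half_width, expanded_uncertainty):
    """Shrink the tolerance zone on each side by the expanded uncertainty."""
    accept_half_width = tolerance_half_width - expanded_uncertainty
    if accept_half_width <= 0:
        raise ValueError("uncertainty is too large to make any accept decision")
    return nominal - accept_half_width, nominal + accept_half_width


def decide(measured, nominal, tolerance_half_width, expanded_uncertainty):
    """Binary decision against the guard-banded acceptance zone."""
    low, high = acceptance_limits(nominal, tolerance_half_width, expanded_uncertainty)
    return "accept" if low <= measured <= high else "reject"


# Hypothetical case: 10.000 mm nominal, +/-0.010 mm tolerance, U = 0.0025 mm.
print(round(uncertainty_ratio(0.010, 0.0025), 2))
print(decide(10.005, 10.000, 0.010, 0.0025))
print(decide(10.008, 10.000, 0.010, 0.0025))
```

Note that the 10.008 mm reading sits inside the stated tolerance but outside the guard-banded zone, so it is not accepted. That narrower zone is the price paid for lowering false-accept risk, and it shrinks as the TUR improves.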

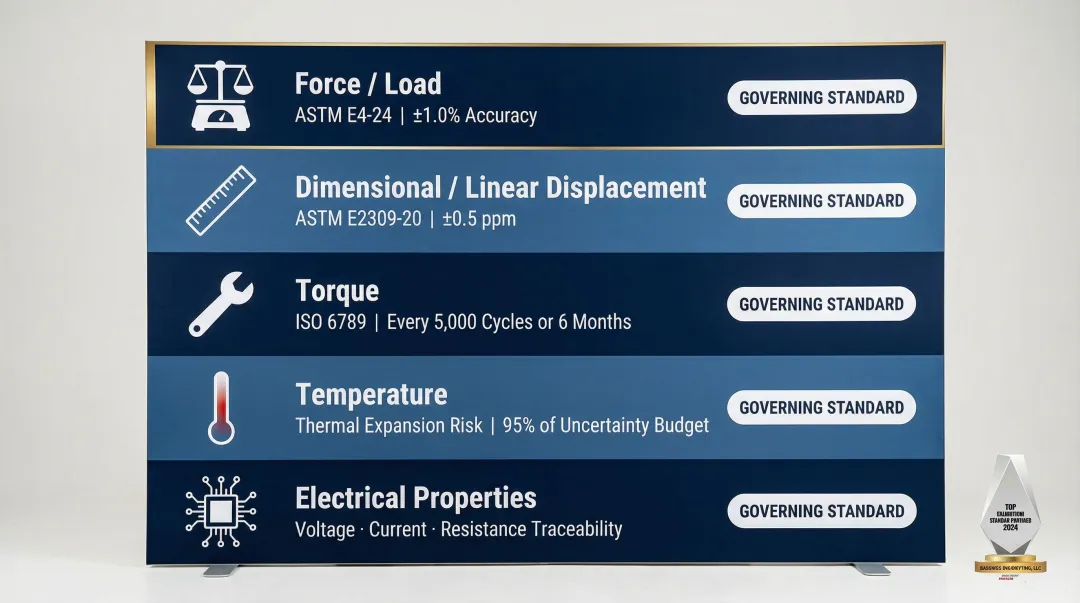

Primary Measurement Parameters

Five parameters drive precision testing accuracy:

- Force/Load — Verified per ASTM E4-24; requires ±1.0% accuracy, traceable to SI units. Applies to UTMs, load cells, and force testers.

- Dimensional/Linear Displacement — Verified per ASTM E2309-20. Laser interferometers achieve ±0.5 ppm for CNC machine tool volumetric calibration.

- Torque — Verified per ISO 6789; recommends testing every 5,000 cycles or 6 months to prevent fastener failures.

- Temperature — Uncompensated thermal expansion can consume over 95% of an allowable uncertainty budget. Temperature-compensated strain gauges and strict environmental controls are essential.

- Electrical Properties — Voltage, current, and resistance measurements must be traceable to maintain calibration integrity in electronic testing equipment.
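The temperature item is easy to underestimate, so a quick calculation helps. The sketch below uses the standard linear expansion relation (change in length equals length times expansion coefficient times temperature change). The steel coefficient of 11.7 parts per million per degree Celsius is an assumed typical handbook value, not a figure for any specific machine, and the function name is hypothetical.

```python
# Assumed typical linear expansion coefficient for steel, per degree Celsius.
STEEL_CTE_PER_C = 11.7e-6


def thermal_growth(length, cte_per_c, delta_t_c):
    """Linear thermal expansion: length * coefficient * temperature change."""
    return length * cte_per_c * delta_t_c


# A 40-inch axis warming by 1 degree Celsius, compared with a 0.0001-inch spec.
growth_in = thermal_growth(40.0, STEEL_CTE_PER_C, 1.0)
print(f"growth: {growth_in:.6f} in")
print(f"multiple of a 0.0001 in spec: {growth_in / 0.0001:.1f}")
```

Under these assumptions a single degree of uncompensated temperature change moves a 40-inch steel axis by roughly 0.00047 inch, several times a ±0.0001-inch positioning spec, which is why compensation and environmental control matter so much.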

Governing Standards (2026 Current Versions)

| Standard | Scope | Key Requirement |
|---|---|---|
| ISO/IEC 17025:2017 | Lab competence & impartiality | Required for issuing credible calibration certificates |
| ISO 9001:2015 / 2026 draft | Quality management systems | Emphasizes risk-based thinking and organizational resilience |
| ASTM E4-24 | Force calibration, static/quasi-static machines | ±1.0% accuracy, SI traceability |
| ASTM E2309/E2309M-20 | Displacement measuring systems | Verification procedures for material testing machines |
| ISO 10360 Series | CMM performance evaluation | Replaces withdrawn ASME B89.1.12M; reference in all CMM procurement contracts |
| ISO 6789 | Torque tool calibration | Testing every 5,000 cycles or 6 months |

The Real Cost of Uncalibrated Machines

Compounding Effect of Measurement Drift

A machine slightly out of calibration doesn't just produce one bad part—it systematically skews every part produced during the interval. The compounding effect means a 0.002-inch positioning error on a CNC machine might result in hundreds or thousands of out-of-spec parts before the problem is discovered, generating scrap, customer returns, or warranty claims that dwarf calibration costs.
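The compounding effect is simple arithmetic, and a rough sketch makes the scale visible. Every number below is hypothetical and chosen only for illustration; substitute your own production rate, verification interval, and per-part cost.

```python
def drift_exposure(parts_per_day, days_since_last_check, cost_per_scrapped_part):
    """Return (suspect part count, worst-case scrap cost) for one drift interval."""
    suspect_parts = parts_per_day * days_since_last_check
    return suspect_parts, suspect_parts * cost_per_scrapped_part


# Hypothetical shop: 200 parts per day, 30 days since the last verification,
# 45 dollars of material and machine time per scrapped part.
parts, cost = drift_exposure(200, 30, 45.0)
print(f"{parts} suspect parts, up to ${cost:,.0f} at risk")
```

The figure is a worst case, since not every part made during the interval is necessarily out of spec, but every one of them becomes suspect once the drift is found.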

Industry estimates put the cost of poor calibration quality at $1,734,000 annually for manufacturers, rising to $4,000,000 for companies with revenues over $1 billion. One case study found that simply adding visibility and accountability to calibration-tied cost-of-quality metrics reduced scrap and rework by several million dollars.

New Machine Acceptance Risk

Without calibration testing upon delivery, a machine may not meet specifications promised by the manufacturer while still under warranty. Manufacturers may be accepting equipment that underperforms without knowing it—missing the window to demand corrections or replacements at the vendor's expense.

New machines often require adjustment following shipping and installation. Verifying performance before that warranty window closes protects your investment and establishes a documented baseline for future tracking.

The Financial Penalty of Unplanned Downtime

Unplanned downtime due to equipment failure or measurement drift carries serious financial penalties. According to Siemens' 2024 True Cost of Downtime report, unplanned downtime costs the world's 500 largest companies $1.4 trillion annually—equivalent to 11% of their revenues.

Sector-specific impacts include:

- Automotive: Production line downtime costs have doubled since 2019, now reaching $2.3 million per hour (over $600 per second)

- Heavy Industry: Downtime costs have quadrupled since 2019, reaching $59 million annually per plant

- General Manufacturing: U.S. manufacturers lose an average of $260,000 per hour of downtime

When precision testing equipment drifts out of tolerance, the result is false acceptances (passing bad parts) or false rejections (scrapping good parts). Either way, the damage shows up in scrap rates, customer returns, and a reputation that takes far longer to rebuild than a calibration takes to perform.

What to Look for in a Precision Calibration Service

Core Evaluation Criteria

When vetting calibration providers, these criteria separate capable partners from checkbox vendors:

- ISO/IEC 17025 accreditation — Demand explicit measurement uncertainties (expanded, k=2) on every certificate. Generic "NIST traceable" claims without documented uncertainty values will trigger audit deficiencies.

- Calibration uncertainty ratios — The provider's CMC uncertainty must be smaller than your required tolerance. Providers measuring to 1.0 parts per million go well beyond the standard 10:1 rule and deliver meaningfully higher confidence.

- Turnaround time — Every day equipment sits in a calibration queue is a day of lost production capacity. Faster turnaround translates directly to reduced downtime costs.

- Equipment-specific experience — A provider familiar with CNC routers, rotary axis machines, and CMMs understands the failure modes specific to your equipment, not just generic procedures.

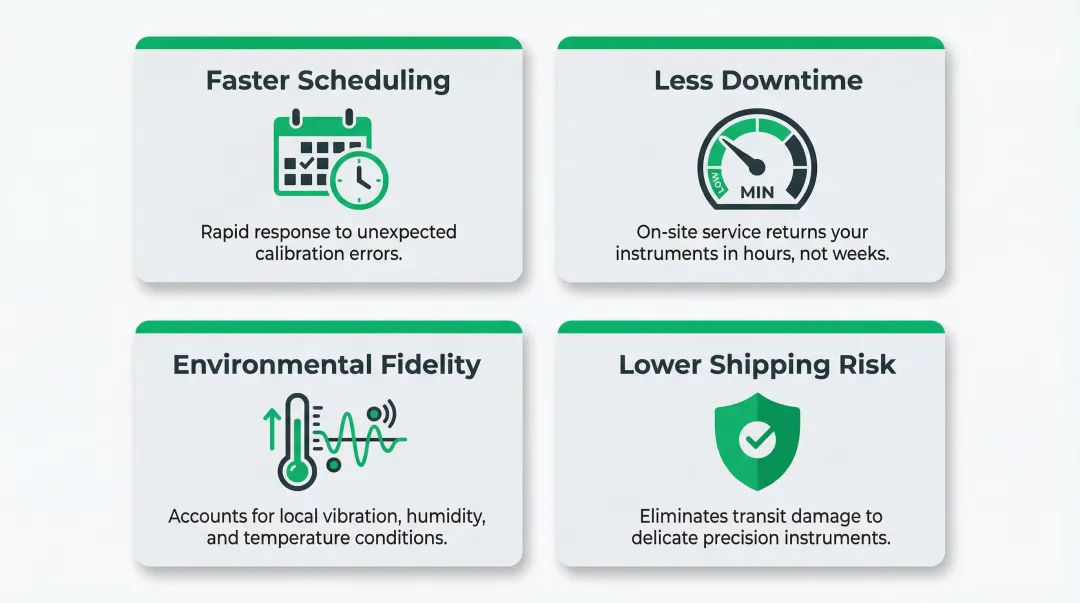

The Local Calibration Advantage

Working with a local calibration provider offers practical advantages that reduce costs and keep production moving:

- Faster scheduling — Local providers can respond quickly when a machine shows unexpected errors, shrinking the window between problem detection and resolution.

- Less downtime — On-site calibration eliminates days lost to packing, shipping, and lab queues. Instruments return to service in hours, not weeks.

- Environmental fidelity — Calibrating instruments in their actual operating environment accounts for local variables like vibration, humidity, and temperature swings, improving real-world accuracy.

- Lower shipping risk — Sending delicate CMM artifacts or load cells out-of-house introduces shock and transit damage risks that on-site service bypasses entirely.

Sarkinen Calibrating serves Portland OR and SW Washington manufacturers with this local model. Founder Larry started as a machine operator, which shapes how the company approaches every engagement: calibration as a production-efficiency tool, not just a compliance requirement.

Proactive vs. Reactive Calibration

Look for a provider who treats calibration as a proactive maintenance discipline rather than a reactive fix. The right partner helps establish calibration intervals based on risk assessment (per ILAC G24:2022), interprets trends in machine performance, and flags potential issues before they cause downtime.

While annual calibration is a common default, intervals should be determined based on the equipment's drift history, severity of use, required measurement uncertainty, and environmental conditions—not arbitrary calendar dates. A provider who understands your production demands can tailor schedules that balance risk and operational continuity.
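One way to picture a drift-based interval is to project the worst drift rate seen in past calibrations forward until it would use up a chosen fraction of the tolerance. The sketch below is a deliberately simple illustration, not a method prescribed by any standard. It assumes the instrument was adjusted back to nominal at each calibration and that drift is roughly linear. The function name, the 80% safety fraction, and the 1 to 24 month clamp are all hypothetical choices.

```python
def suggest_interval_months(as_found_errors, months_between, tolerance,
                            safety_fraction=0.8, min_months=1.0, max_months=24.0):
    """Months until the worst observed drift rate reaches a fraction of tolerance."""
    rates = [abs(e) / m for e, m in zip(as_found_errors, months_between) if m > 0]
    if not rates:
        raise ValueError("need at least one calibration with a positive interval")
    worst_rate = max(rates)
    if worst_rate == 0:
        return max_months  # no measurable drift: cap at the longest allowed interval
    months = safety_fraction * tolerance / worst_rate
    return max(min_months, min(max_months, months))


# Hypothetical history: as-found errors (same units as tolerance) after 12-month intervals.
print(round(suggest_interval_months([0.4, 0.6, 0.5], [12, 12, 12], tolerance=1.0), 1))
print(round(suggest_interval_months([1.2], [12], tolerance=1.0), 1))
```

In the first made-up history the equipment stayed well inside tolerance, so the projection stretches past a year. In the second it was found out of tolerance, so the suggested interval shortens. Severity of use and environment would still need to be weighed by someone who knows the machine.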

Frequently Asked Questions

What are the different types of precision testing machines?

The main categories include electromechanical and hydraulic universal testing machines (material property validation), coordinate measuring machines (dimensional verification), laser interferometers (CNC axis calibration), torque testers (assembly tool verification), and force measurement systems. Each validates a different property with traceable accuracy.

What are some examples of precision equipment?

Common examples include digital calipers, micrometers, CMMs, laser interferometers, load cells, and calibrated force gauges. What qualifies as "precision" equipment is defined by measurement resolution and traceability to recognized standards: a $50 caliper qualifies only if it has been calibrated with documented uncertainty.

What is the most precise measuring device?

Laser interferometers and atomic force microscopes represent the highest levels of dimensional precision available, capable of measuring at the nanometer scale. The Renishaw XL-80 laser interferometer achieves ±0.5 ppm linear accuracy with 1-nanometer resolution. For most manufacturing work, instruments accurate to one millionth of an inch — as used in NIST-traceable calibration — are the accepted standard.

Why is NIST traceability important for calibration?

NIST traceability provides an auditable chain of measurement accountability back to national standards, required for regulatory compliance, customer contracts, and proof that a machine's output meets specified tolerances. Without documented traceability, calibration certificates carry no independent verification and will not hold up during audits or contract disputes.

How often should precision testing machines be calibrated?

Most precision equipment in active manufacturing is calibrated annually at minimum. ILAC G24:2022 recommends setting intervals on a risk basis rather than by arbitrary calendar dates, so higher-use or higher-stakes machines need more frequent service. Torque tools, for instance, should be verified every 5,000 cycles or 6 months per ISO 6789.

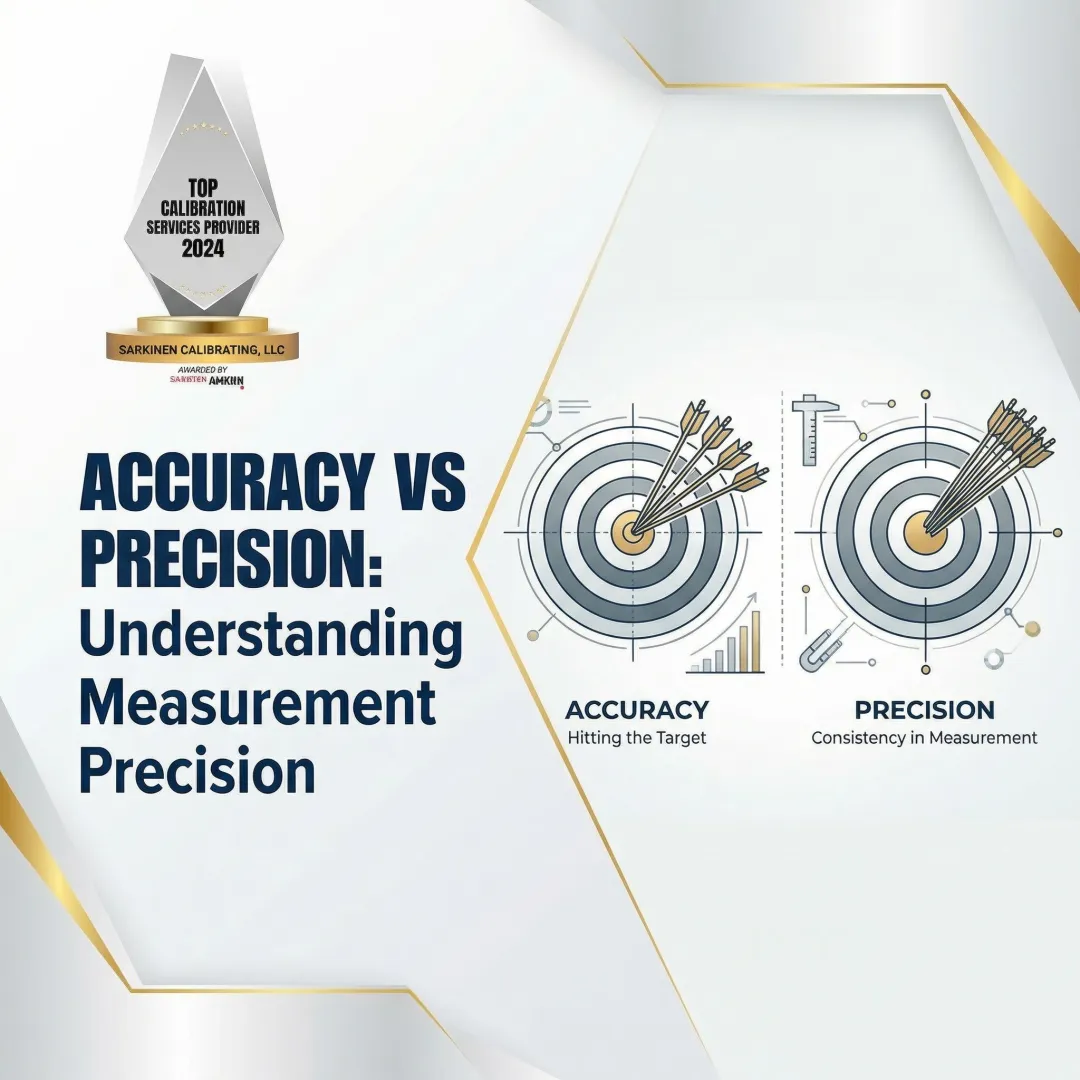

What is the difference between precision and accuracy in measurement?

Accuracy refers to how close a measurement is to the true value (hitting the target), while precision refers to the repeatability of that measurement (tight grouping). A machine can be precise (consistent) but inaccurate (consistently wrong), or accurate on average but imprecise (scattered results). Both must be validated through calibration to ensure reliable production quality—precision without accuracy produces consistent defects.
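The distinction can be put into numbers by measuring a known reference several times. In the sketch below, bias (mean reading minus reference value) stands in for accuracy and the sample standard deviation stands in for precision. The function name and both sets of readings are hypothetical.

```python
from statistics import mean, stdev


def bias_and_repeatability(readings, reference):
    """Return (bias, spread): mean error against the reference and sample std dev."""
    return mean(readings) - reference, stdev(readings)


reference_mm = 10.000
precise_but_inaccurate = [10.021, 10.020, 10.019, 10.020]
accurate_but_imprecise = [9.98, 10.02, 9.99, 10.01]

for label, data in [("precise but inaccurate", precise_but_inaccurate),
                    ("accurate but imprecise", accurate_but_imprecise)]:
    bias, spread = bias_and_repeatability(data, reference_mm)
    print(f"{label}: bias={bias:+.4f} mm, spread={spread:.4f} mm")
```

The first set repeats tightly but sits about 0.020 mm high, the pattern that produces consistent defects. The second averages out to the reference but scatters far more from reading to reading.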